Our ability to detect, amplify, and sequence tiny amounts of DNA has led to a scientific revolution. We can now take a small sample of water from a lake and, by analyzing the environmental DNA (eDNA) in that water, determine all of the things that live in the lake. This is an amazingly powerful tool. My favorite application of this technique was to demonstrate the absence of DNA in Loch Ness from any giant reptile or aquatic dinosaur. So-called eDNA is perhaps the most powerful evidence of a negative, the absence of a creature in an environment – you can’t hide your eDNA.


The ultimate limiting factor on eDNA is how long such DNA will survive. DNA has a half-life: it spontaneously degrades and sheds information until it is no longer useful for sequencing. Previously, scientists extracted DNA from ice cores in Greenland and were able to sequence DNA up to 800,000 years old. The oldest DNA ever recovered was probably 1.1-1.2 million years old. Based on this, scientists estimated that the ultimate lifespan of usable DNA was about 1 million years. This put the final nail in the coffin of any dreams of a Jurassic Park. Non-avian dinosaurs died out about 66 million years ago, so none of their DNA should still be left on Earth (the closest we can get is related DNA in birds). So no T. rex DNA in amber.

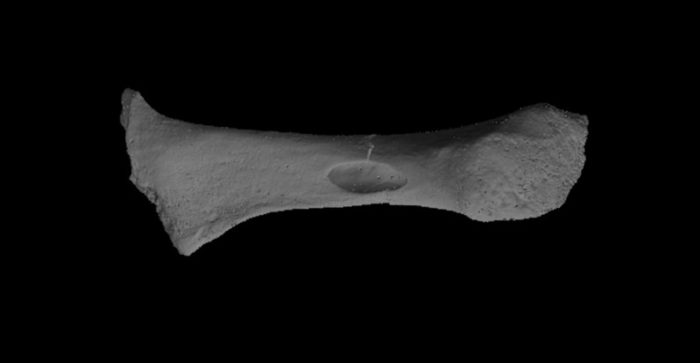

According to a new analysis from the northernmost region of Greenland, however, we have to push back the estimate of how long DNA can survive to at least 2 million years. That is a significant increase (but still a long way from T. rex). The site is the Kap København Formation, located in Peary Land in north Greenland. This is now a barren frozen desert. There are also very few macrofossils here, mostly boreal forest plants and insects, with the only vertebrate remains being a hare’s tooth. Conditions there are apparently not conducive to fossilization. We do know that 2 million years ago Greenland was much warmer, about 10 degrees C warmer than present. So there is no reason it should not have been teeming with life.

The new analysis of eDNA finds that, in fact, it was. They found DNA from hares, but also from rodents, reindeer, geese, and mastodons. They also found DNA from poplars, birch trees, and thuja trees (a type of coniferous tree), as well as a rich assortment of bushes, herbs, and other flora. Basically this was a mixed forest with a rich ecosystem. In addition they found marine species including horseshoe crab and green algae, confirming the warmer climate.

This ancient eDNA gives us a much more complete picture of the ecosystem than was provided by macrofossils alone. But perhaps more importantly – it demonstrates that eDNA can survive for at least two million years, roughly doubling the previous estimate. The researchers speculate that minerals in the soil bound to the DNA and stabilized it, slowing its degradation. DNA is negatively charged. This property is used to separate out chunks of DNA in a sample by size. You apply an electric field, which pulls the DNA pieces through a gel at a rate that depends on their size, with smaller fragments moving faster. In this case the negatively charged DNA bound to positively charged minerals in the soil. I guess this is the DNA version of fossilization.

The question is – in such environments where DNA is stabilized by binding to minerals, how much is the degradation process slowed down, and therefore how long can DNA survive? DNA breaks down due to “microbial enzymatic activity, mechanical shearing and spontaneous chemical reactions such as hydrolysis and oxidation.” DNA breaks down faster at higher temperatures, so the fact that this DNA remained frozen for so long is crucial. But freezing alone was not enough, which is why scientists think that binding to minerals also played a role.

They measured the “thermal age” of the DNA, which is how long it would have taken to degrade to its current state if it had been kept at a constant temperature of 10 degrees C. The result was 2,700 years, 741 times less than its actual age of 2 million years. Therefore it degraded 741 times slower than exposed DNA at 10 degrees C. The average temperature at the site is -17 degrees C. They further found that the DNA was bound mostly to clay minerals, and specifically smectite (and to a lesser degree, quartz).
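The slowdown factor is just the ratio of the two ages quoted above. Here is a minimal sketch of that arithmetic in Python; the function and variable names are my own for illustration, and this is not the method the researchers used to estimate the thermal age itself.

```python
def slowdown_factor(actual_age_years: float, thermal_age_years: float) -> float:
    """Ratio of the real age to the thermal age (equivalent time at 10 degrees C).

    Illustrative helper only; the name and signature are assumptions.
    """
    return actual_age_years / thermal_age_years


# Figures quoted in the post: about 2 million years old, thermal age 2,700 years.
actual_age = 2_000_000
thermal_age = 2_700

factor = slowdown_factor(actual_age, thermal_age)
print(f"Degradation was roughly {round(factor)} times slower")  # roughly 741
```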

Perhaps this is the limit of DNA survival – although we thought the previous record of 1.1-1.2 million years was the limit. It is possible there may be environmental conditions elsewhere in the world that could slow DNA degradation even further. Slow DNA degradation by a factor of 30 or so beyond the Kap København Formation and we are getting into the time of dinosaurs. This is unlikely. Constant freezing temperatures are required, in addition to geological stability and optimal soil conditions. But I don’t think we can say now that it is impossible, just highly unlikely. I did not see any estimate in the study about the ultimate upper limit of DNA lifespan, but I suspect we will see such analyses based on this latest information.
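To see where "a factor of 30 or so" comes from, here is a rough back-of-the-envelope sketch. It assumes the commonly cited figure of about 66 million years for the extinction of the non-avian dinosaurs and treats DNA survival time as simply scaling with the slowdown in degradation, which is a simplification on my part. The names are hypothetical.

```python
def extra_slowdown_needed(target_age_years: float, current_record_years: float) -> float:
    """How many times slower degradation would need to be to reach the target age.

    Assumes survival time scales linearly with the slowdown, a simplification.
    """
    return target_age_years / current_record_years


# Assumption: non-avian dinosaurs died out about 66 million years ago.
dinosaur_extinction = 66_000_000
# From the post: Kap København DNA is about 2 million years old.
kap_kobenhavn_age = 2_000_000

extra = extra_slowdown_needed(dinosaur_extinction, kap_kobenhavn_age)
print(f"Additional slowdown needed: about {extra:.0f}x")
```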

The best evidence, however, will come from simply looking in new locations for eDNA, especially those that likely have the optimal conditions for maximal DNA longevity. But for now, being able to reconstruct ecosystems from 2 million years ago is still pretty cool.

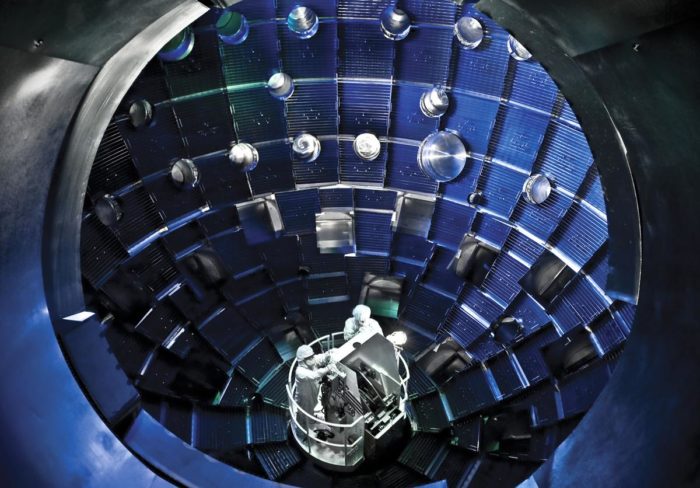

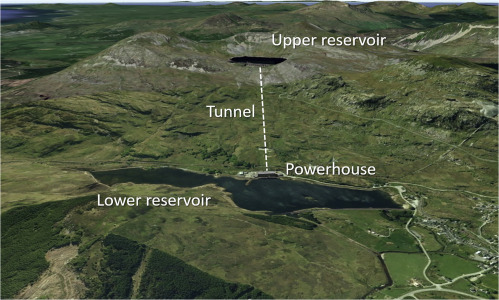

Much of the discussion about how we are going to rapidly change over our energy infrastructure to low carbon energy involves existing technology, or at most incremental advancements. The problem is, of course, that we are up against the clock and the best solutions are ones that we can implement immediately. Even next generation fission reactors are controversial because they are not a tried-and-true technology, even though fission technology itself is. It certainly would not be prudent to count on an entirely new technology as our solution. If some game-changing technology emerges, great, but until then we will make due with what we know works.

Much of the discussion about how we are going to rapidly change over our energy infrastructure to low carbon energy involves existing technology, or at most incremental advancements. The problem is, of course, that we are up against the clock and the best solutions are ones that we can implement immediately. Even next generation fission reactors are controversial because they are not a tried-and-true technology, even though fission technology itself is. It certainly would not be prudent to count on an entirely new technology as our solution. If some game-changing technology emerges, great, but until then we will make due with what we know works.

Our ability to detect, amplify, and sequence tiny amount of DNA has lead to a scientific revolution. We can now take a small sample of water from a lake, and by analyzing the environmental DNA in that water determine all of the things that live in the lake. This is an amazingly powerful tool. My favorite application of this technique was to demonstrate

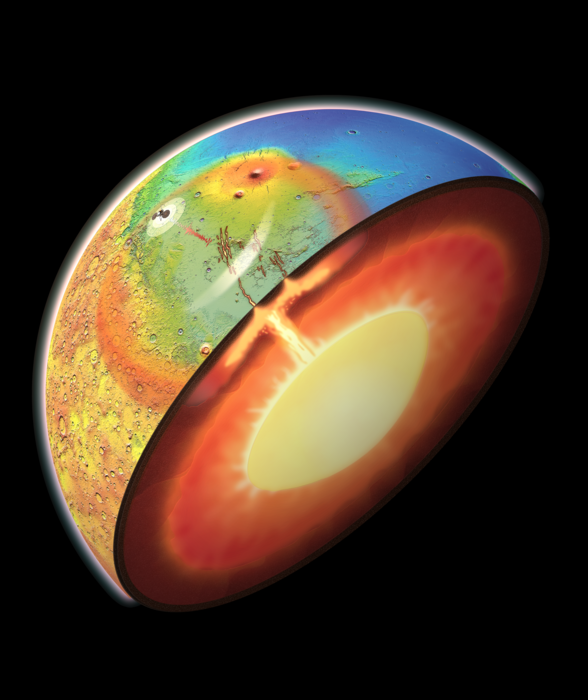

Our ability to detect, amplify, and sequence tiny amount of DNA has lead to a scientific revolution. We can now take a small sample of water from a lake, and by analyzing the environmental DNA in that water determine all of the things that live in the lake. This is an amazingly powerful tool. My favorite application of this technique was to demonstrate  Mars is perhaps the best candidate world in our solar system for a settlement off Earth. Venus is too inhospitable. The Moon is a lot closer, but the extremely low gravity (.166 g) is a problem for long-term habitation. Mars gravity is 0.38 g, still low by Earth standards but better than the Moon. But there are some other differences between Earth and Mars. Mars has only a very thin atmosphere, less than 1% that of Earth’s. That’s just enough to cause annoying sand storms, but not enough to avoid the need for pressure suits. Mars lost its atmosphere because it was stripped away by the solar wind – because Mars also does not have a global magnetic field to protect itself. The thin atmosphere and lack of magnetic field also exposes the surface to lots of radiation.

Mars is perhaps the best candidate world in our solar system for a settlement off Earth. Venus is too inhospitable. The Moon is a lot closer, but the extremely low gravity (.166 g) is a problem for long-term habitation. Mars gravity is 0.38 g, still low by Earth standards but better than the Moon. But there are some other differences between Earth and Mars. Mars has only a very thin atmosphere, less than 1% that of Earth’s. That’s just enough to cause annoying sand storms, but not enough to avoid the need for pressure suits. Mars lost its atmosphere because it was stripped away by the solar wind – because Mars also does not have a global magnetic field to protect itself. The thin atmosphere and lack of magnetic field also exposes the surface to lots of radiation. Construction begins this week on what will be the largest radio telescope in the world –

Construction begins this week on what will be the largest radio telescope in the world –  Yesterday

Yesterday  People tend to understand the world through the development of narratives – we tell stories about the past, the present, ourselves, others, and the world. That is how we make sense of things. I always find it interesting, the many and often subtle ways in which our narratives distort reality. One common narrative is that the past was simpler and more primitive than it actually was, and that progress is linear, objective, and inevitable. I remember watching

People tend to understand the world through the development of narratives – we tell stories about the past, the present, ourselves, others, and the world. That is how we make sense of things. I always find it interesting, the many and often subtle ways in which our narratives distort reality. One common narrative is that the past was simpler and more primitive than it actually was, and that progress is linear, objective, and inevitable. I remember watching  There is some good new when it comes to decarbonizing our civilization (reducing the amount of CO2 from previously sequestered carbon that our industries release into the atmosphere) – we already have the technology to accomplish most of what we need to do. Right now

There is some good new when it comes to decarbonizing our civilization (reducing the amount of CO2 from previously sequestered carbon that our industries release into the atmosphere) – we already have the technology to accomplish most of what we need to do. Right now  I have been writing a lot recently about global warming and energy infrastructure. This is partly because there is a lot of news coming out of COP27, but also because both here and on the SGU there has been some lively and informative discussion on the issue. Also, this is a very complex issue and as people raise new points it sends me down different rabbit holes of information. I am trying to develop the most complete and objective picture I can of the situation.

I have been writing a lot recently about global warming and energy infrastructure. This is partly because there is a lot of news coming out of COP27, but also because both here and on the SGU there has been some lively and informative discussion on the issue. Also, this is a very complex issue and as people raise new points it sends me down different rabbit holes of information. I am trying to develop the most complete and objective picture I can of the situation. There are now approximately 8 billion people on the planet. In addition, there are over

There are now approximately 8 billion people on the planet. In addition, there are over  There are some situations in which biology is still vastly superior to any artificial technology. Think about muscles. They are actually quite amazing. They can rapdily contract with significant force and then immediately relax. They can also vary their contraction strength smoothly along a wide continuum. Further, they are soft and silent. No machine can come close to their functionality.

There are some situations in which biology is still vastly superior to any artificial technology. Think about muscles. They are actually quite amazing. They can rapdily contract with significant force and then immediately relax. They can also vary their contraction strength smoothly along a wide continuum. Further, they are soft and silent. No machine can come close to their functionality.