May 18 2026

Privacy In a Digital World

It is old news that all the tech we now live with is constantly gathering data about us. It is important, however, not to become complacent about this, or to assume the situation cannot get worse or is not getting worse. Pretty much every piece of digital technology that you interact with is likely gathering some personal information about you, which is used to target advertising or is sold to third parties. Regulations in most countries are inadequate and fail to keep up with technological changes.

One of the latest venues to soak up information about you may be surprising – your car. Cars are increasingly computerized, and they typically collect driving behavior data – how fast you drive, how hard you brake, and how tightly you take turns. Some vehicles also have driver-facing cameras, which means they can monitor your behavior visually. Sometimes this is sold as a safety feature, to tell if you are too sleepy or inebriated to drive. Sometimes this is part of a system to get your insurance company to reduce your rates if you think you are a safe driver. But often it is done without disclosure. Recently GM was found to have collected and sold such data without the user's permission, and was banned from doing so for five years. But many other car manufacturers also do this.

All they really have to do is bury some disclosure deep in the user agreement, which functionally nobody reads, and they are covered. You may have the ability to opt out of such data selling. Of course, putting the burden on the end user to find and read any such disclosures, and then go through the steps necessary to opt out, is a huge problem. In fact, insurance companies will buy data from car companies and then use that data to increase your insurance premiums, without your having opted into any of it.

The basic fact is that collecting data from users, packaging that data, and then selling it to third parties is a huge industry, estimated at $240 billion globally. When that kind of money is on the line, companies are going to do everything they can to capitalize on it, while avoiding legal issues by either flying under the radar or hiding behind legal fig leaves (like the buried consumer disclosures). They will also use that money to lobby the government to let them continue to do so, or even to mandate certain things that will help this industry. For example, some car monitoring technology is sold as a safety feature, and it can legitimately be used for this purpose. Other technologies are convenience features, like GPS. But once all the sensors and cameras are in place, they will soak up all the data they can – because that data is worth billions.

I came across a few news items that I could possibly write about today and couldn’t decide which to cover, so I will write about all of them, since they all relate to renewable energy.

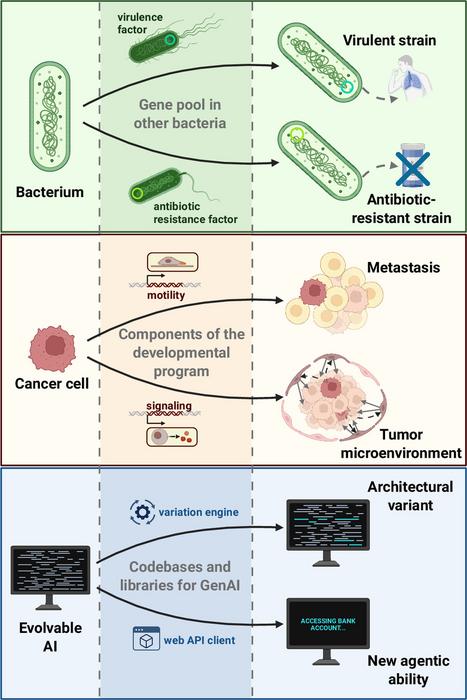

I came across a few news items that I could possibly write about today and couldn’t decide which to cover, so I will write about all of them, since they all relate to renewable energy. I thought everyone needed one more thing to worry about, so here you go: evolving AI. When I hear this phrase I think of two things. The first are AI systems designed to simulate organic evolution. The second are artificially intelligent systems that are capable of evolving themselves. That latter one is the type you need to worry about.

I thought everyone needed one more thing to worry about, so here you go: evolving AI. When I hear this phrase I think of two things. The first are AI systems designed to simulate organic evolution. The second are artificially intelligent systems that are capable of evolving themselves. That latter one is the type you need to worry about. The recent rapid advance in the capabilities of artificial intelligence (AI) applications I think qualifies as a disruptive technology. The term “disruptive technology” was popularized in

The recent rapid advance in the capabilities of artificial intelligence (AI) applications I think qualifies as a disruptive technology. The term “disruptive technology” was popularized in  Last week I wrote about the possibilities of

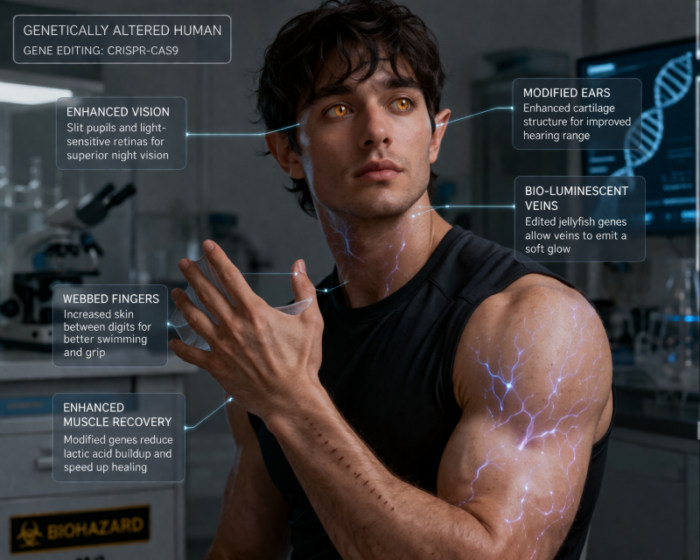

Last week I wrote about the possibilities of  Are we getting close to the time when parents would have the option of genetically engineering their children at the embryo stage? If so, is this a good thing, a bad thing, or both? In order for this to happen such engineering would need to be technically, legally, and commercially viable. Let’s take these in order, and then discuss the potential implications.

Are we getting close to the time when parents would have the option of genetically engineering their children at the embryo stage? If so, is this a good thing, a bad thing, or both? In order for this to happen such engineering would need to be technically, legally, and commercially viable. Let’s take these in order, and then discuss the potential implications. Many teachers are panicking over AI (artificial intelligence), and for good reason. This goes beyond students using AI to cheat on their homework or write their essays for them. If you have AI essentially think for you, then you will not learn to think. On the other hand

Many teachers are panicking over AI (artificial intelligence), and for good reason. This goes beyond students using AI to cheat on their homework or write their essays for them. If you have AI essentially think for you, then you will not learn to think. On the other hand  As we anticipate the Artemis II launch, now slated for early April with plans to take four astronauts on a trip around the Moon and back to Earth, NASA has been unveiling some significant changes to its plans for returning to the Moon and beyond. If you have fallen behind these announcements, here is a summary of the important bits.

As we anticipate the Artemis II launch, now slated for early April with plans to take four astronauts on a trip around the Moon and back to Earth, NASA has been unveiling some significant changes to its plans for returning to the Moon and beyond. If you have fallen behind these announcements, here is a summary of the important bits. In the decades before the Wright brothers historic 1903 flight at Kitty Hawk

In the decades before the Wright brothers historic 1903 flight at Kitty Hawk