Feb 09 2026

Uranium and Motivated Reasoning

This post is only partly about uranium, but mostly about motivated reasoning – our ability to harness our reasoning power not to arrive at the most likely answer, but to support the answer we want to be true. But let’s chat about uranium for a bit. In the comments to my recent article on a renewable grid, one commenter referred to a blog post on Skeptical Science and quoted:

“Abbott 2012, linked in the OP, lists about 13 reasons why nuclear will never be capable of generating a significant amount of power. Nuclear supporters have never addressed these issues. To me, the most important issue is there is not enough uranium to generate more than about 5% of all power.”

This is the flip side, I think, to the misinformation about renewable energy I was discussing in that post. Let me say, I don’t think there is an objective right answer here, but my personal view is that the pathway to net zero that emits the least amount of carbon includes nuclear energy, a view that is in line with the IPCC. There is, however, still a lot of anti-nuclear bias out there, just as there is pro-fossil fuel bias, and pro-renewable bias, and every kind of bias. If you want to make a case for any particular source of power, there are enough variables to play with that you can make a case. However, factual misstatements are different – we should at least be arguing from the same set of verified facts. So let’s address the question – how much uranium is there?

There is no objective answer to this question. Why not? Because it depends on your definition. Most estimates of how much uranium there is in the world, in the context of how much is available for nuclear power, do not include every atom of uranium. They generally take several approaches – how much is in current usable stockpiles, how much is being produced by active mines, and how much is “commercially” available. That last category depends on where you draw the line, which depends on the current price of uranium as well as the value of the energy it produces. If, for example, we decided to price the cost of emitting carbon from energy production, the value of uranium would suddenly increase. It also depends on the technology to extract and refine uranium. The value of uranium is also determined by the efficiency of reactors.

Engaging on social media to discuss pseudoscience can be exhausting, and make one weep for humanity. I have to keep reminding myself that what I am seeing is not necessarily representative. The loudest and most extreme voices tend to get amplified, and people don’t generally make videos just to say they agree with the mainstream view on something. There is massive selection bias. But still, to some extent social media does both reflect the culture and also influence it. So I like to not only address specific pieces of nonsense I find but also to look for patterns, patterns of claims and also of thought or narratives.

As human civilization spreads into every corner of the world, human and animal territories are butting up against each other more intensely. This often doesn’t end well for the animals. This is also causing evolutionary pressures that are adapting some species to living in close proximity to humans. This is not really anything new, but it is taking on a new scope. The WSJ recently wrote about

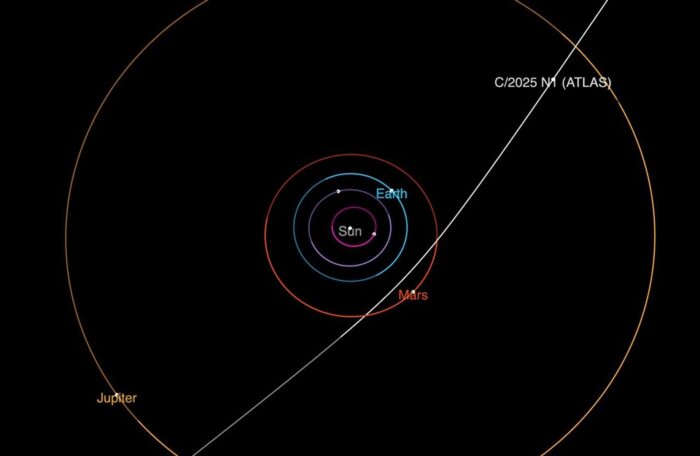

Avi Loeb is at it again. He is the Harvard astrophysicist who first gained notoriety

One potentially positive outcome from the COVID pandemic is that it was a wakeup call – if there was any doubt previously about the fact that we all live in one giant interconnected world, it should not have survived the recent pandemic. This is particularly true when it comes to infectious disease. A bug that breaks out on the other side of the world can make its way to your country, your home, and cause havoc. It’s also not just about the spread of infectious organisms, but the breeding of these organisms.

It’s probably not a surprise that a blog author dedicated to critical thinking and neuroscience feels that misinformation is one of the most significant threats to society, but I really do think this. Misinformation (false, misleading, or erroneous information) and disinformation (deliberately misleading information) have the ability to cause a disconnect between the public and reality. In a democracy this severs the feedback loop between voters and their representatives. In an authoritarian government it is a tool of control and repression. In either case citizens cannot freely choose their representatives. This is also the problem with extreme gerrymandering – in which politicians choose their voters rather than the other way around.

At CSICON this year I gave a talk about topics over which skeptics have disagreed, and continue to disagree, with each other. My core theme was that these are the topics we absolutely should be discussing with each other, especially at skeptical conferences. Nothing should be taboo or too controversial. We are an intellectual community dedicated to science and reason, and have spent decades talking about how to find common ground and resolve differences when it comes to empirical claims about reality. But the fact is we sometimes disagree, and this is a great learning opportunity. It’s also humbling, reminding ourselves that the journey toward critical thinking and reason never ends. On several topics self-identified skeptics disagree largely along political grounds, which is a pretty sure sign we are not immune to ideology and partisanship.

How certain are you of anything that you believe? Do you even think about your confidence level, and do you have a process for determining what your confidence level should be or do you just follow your gut feelings?
