Jan 19, 2026

Last week a child of one of my cohosts on the SGU, who is in fifth grade (the child, not the cohost), came home from school and declared, rather dramatically, “Mom, Dad – did you know that we never went to the Moon? It was all fake.” They found this to be a surprising revelation, but were convinced this was a proven scientific fact. Of course, we live in the age of the internet, and our children are going to be exposed to all sorts of information that may be misleading or age-inappropriate. This is one more thing parents have to deal with. What was disturbing about this incident was where they learned this “scientific fact” – from their science teacher.

Any parent should be concerned about this, but in a family of skeptical science communicators, this raised the alarm bells. But the first thing they did was send a polite e-mail to the teacher (cc’ing the principal) and simply ask what happened. This is good practice – always go to the primary source. It’s easy for anyone to get the wrong idea, and this wouldn’t be the first time a fifth grader misinterpreted a lesson in class. The teacher essentially said that while he did not explicitly tell the students we did not go to the Moon (the student reports he said “it’s possible we did not go to the Moon”), he personally believes we did not, and that it is a “proven scientific fact” that it would have been impossible, then and now, to send people to the Moon (somebody should tell the Artemis astronauts).

Apparently he raised at least two points in class – that there were (impossibly) no stars in the background of the photographs taken from the Moon, and the astronauts could not have survived passage through the radiation belts around the Earth. These are both old and long-debunked claims of the Moon-hoax conspiracy theorists. While it is easy to find sources online, let me briefly summarize why these claims are wrong.

Continue Reading »

Mar 17, 2025

A recent BBC article reminded me of one of my enduring technology disappointments over the last 40 years – the failure of the educational system to reasonably (let alone fully) leverage multimedia and computer technology to enhance learning. The article is about a symposium in the UK about using AI in the classroom. I am confident there are many ways in which AI can enhance learning efficacy in the classroom, just as I am confident that we collectively will fail to utilize AI anywhere near its potential. I hope I’m wrong, but it’s hard to shake four decades of consistent disappointment.

What am I referring to? Partly it stems from the fact that in the 1980s and 1990s I had lots of expectations about what future technology would bring. These expectations were born of voraciously reading books, magazines, and articles and watching documentaries about potential future technology, but also from my own user experience. For example, starting in high school I became exposed to computer programs (at first just DOS-based text programs) designed to teach some specific body of knowledge. One program that sticks out walked the user through the nomenclature of chemical reactions. It was a very simple program, but it “gamified” the learning process in a very effective way. By providing immediate feedback, and progressing at the individual pace of the user, the learning curve was extremely steep.

This, I thought to myself, was the future of education. I even wrote my own program in BASIC designed to teach math skills to elementary schoolers, and tested it on my friend’s kids with good results. It followed the same pattern as the nomenclature program: question-response-feedback. I feel confident that my high school self would be absolutely shocked to learn how little this type of computer-based learning has been incorporated into standard education by 2025.

When my daughters were preschoolers I found every computer game I could that taught colors, letters, numbers, categories, etc., again with good effect. But once they got to school age, the resources were scarce and almost nothing was routinely incorporated into their education. The school’s idea of computer-based learning was taking notes on a laptop. I’m serious. Multimedia was also a joke. The divide between what was possible and what was reality just continued to widen. One of the best aspects of social media, in my opinion, is tutorial videos. You can often find much better learning on YouTube than in a classroom.

Continue Reading »

Jul 09, 2024

In an optimally rational person, what should govern their perception of risk? Of course, people are generally not “optimally rational”. It’s therefore an interesting thought experiment – what would be optimal, and how does that differ from how people actually assess risk? Risk is partly a matter of probability, and therefore largely comes down to simple math – what percentage of people who engage in X suffer negative consequence Y? To accurately assess risk, you therefore need information. But that is not how people generally operate.
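
As a minimal sketch of the "simple math" described above, risk can be written as the share of people who engage in X and go on to suffer Y. The function name and the numbers below are hypothetical, chosen only for illustration; they do not come from any study.

```python
def risk(events: int, exposed: int) -> float:
    """Return the proportion of exposed people who suffered the outcome.

    `events` is the number of people who suffered consequence Y,
    `exposed` is the number of people who engaged in activity X.
    """
    if exposed <= 0:
        raise ValueError("exposed must be a positive count")
    if not 0 <= events <= exposed:
        raise ValueError("events must be between 0 and exposed")
    return events / exposed


# Hypothetical counts for illustration only: 12 bad outcomes among 4,000 people.
example_risk = risk(events=12, exposed=4000)
print(f"Risk: {example_risk:.2%}")
```

The calculation itself is trivial; the hard part is having trustworthy counts to feed into it.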

A recent study evaluated how 323 US adults assess the risk of autonomous vehicles. This is a small study, and all the usual caveats apply in terms of how questions were asked. But if we take the results at face value, they are interesting but not surprising. First, information itself did not have a significant impact on risk perception. What did have a significant impact was trust, or more specifically, trust had a significant impact on the relationship between knowledge and risk perception.

What I think this means is that knowledge alone does not influence risk perception unless it is also coupled with trust. This actually makes sense, and is rational. You have to trust the information you are getting in order to confidently use it to modify your perception of risk. However – trust is a squirrely thing. People tend not to trust things that are new and unfamiliar. I would consider this semi-rational. It is reasonable to be cautious about something that is unfamiliar, but this can quickly turn into a negative bias that is not rational. This, of course, goes beyond autonomous vehicles to many new technologies, like GMOs and AI.

Continue Reading »

May 18, 2023

There is an ongoing culture war, and not just in the US, over the content of childhood education, both public and private. This seems to be flaring up recently, but is never truly gone. Republicans in the US have recently escalated this war by banning over 500 books in several states (mostly Florida) because they contain “inappropriate” content. There are a few issues worth exploring here.

First, I think it is an important premise to recognize the value of public education. As educator Dana Mitra summarizes:

Research shows that individuals who graduate and have access to quality education throughout primary and secondary school are more likely to find gainful employment, have stable families, and be active and productive citizens. They are also less likely to commit serious crimes, less likely to place high demands on the public health care system, and less likely to be enrolled in welfare assistance programs. A good education provides substantial benefits to individuals and, as individual benefits are aggregated throughout a community, creates broad social and economic benefits.

Continue Reading »

May 15, 2023

Researchers recently published an extensive survey of almost 6,000 students across academic institutions in Sweden. The results are not surprising, but they do give a snapshot of where we are with the recent introduction of large language model AIs.

Most students, 56%, reported that they use ChatGPT in their studies, and 35% do so regularly. More than half do not know if their school has guidelines on AI use in their classwork, and 62% believe that using a chatbot during an exam is cheating (so 38% do not think that). What this means is that most students are using AI for their classwork, but they don’t know what the rules are and are unclear on what would constitute cheating. Also, almost half of students think that using AI makes them more efficient learners, and many commented that they feel it has improved their own language and thinking skills.

So – is the use of AI in education a bane or a boon? Of course, asking students is only one window into this question. Educators have concerns about AI creating lazy students by serving up good-enough answers to get by. There are also concerns about outright cheating, although that has to be carefully defined. Some teachers don’t know how to react when students turn in essays that appear to have been written by a chatbot. But many also think there is tremendous potential in using AI as an educational tool.

Clearly the availability of the latest generation of large language model AIs is a disruptive technology. Schools are now scrambling to deal with it, but I think they have no choice. Students are moving fast, and if schools don’t keep up they will miss an opportunity and fail to mitigate the potential downsides. What is clear is that AI has the potential to significantly change education. Simplistic solutions like just banning the use of AI are not going to work.

Continue Reading »

Oct 27, 2022

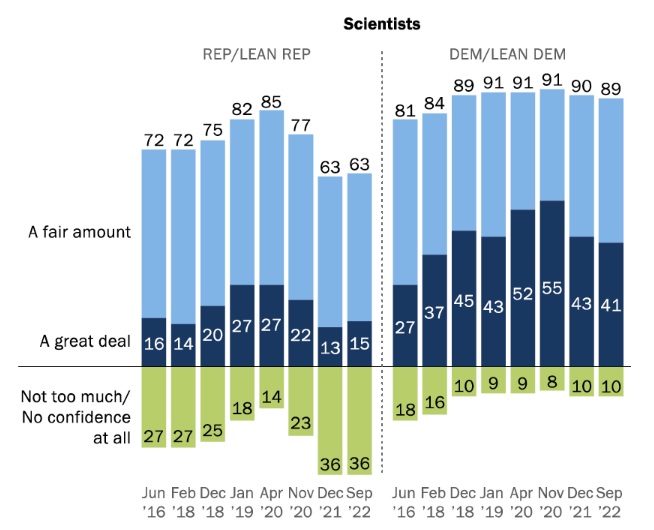

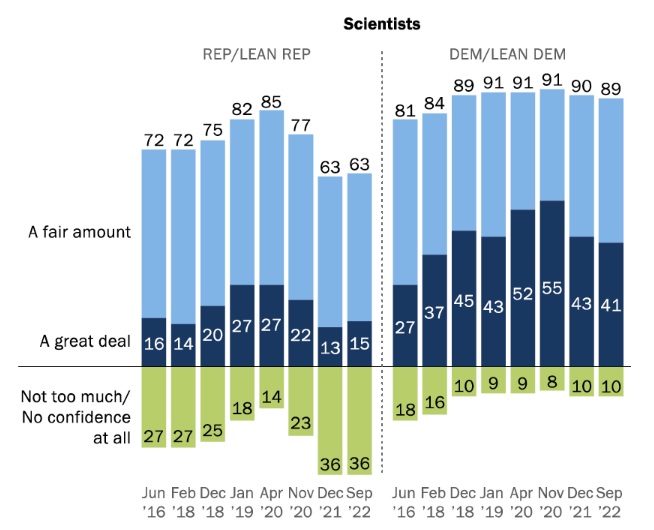

A new Pew survey updates their data on Americans’ trust in scientists. The “good news” is that overall, trust remains high, with 77% saying they trust scientists a great deal or a fair amount, and only 23% not too much or not at all. Actually, when you think about it these numbers are still pretty bad, but they seem good because our expectations are so low. More than one in five people don’t trust scientists. For more perspective, that 77% figure is the same for the military. The highest rated group was medical scientists at 80%. Elected officials were at 28%.

These numbers are also fairly stable over time. Interestingly they did bump up a bit during the pandemic, but then quickly returned to their historical levels. Some argue that these numbers are pretty good and we shouldn’t “freak out about the minority.” I disagree – not that we should freak out, but we do need to take these numbers seriously, and they are not necessarily good news.

One reason I am still concerned about these numbers is that there is a pretty significant partisan divide. Recent years have Democrats at around 90% with Republicans around 63%. More than a third of one major political party does not trust scientists, and they seem to be the political center of the party. This gets even worse if you look at the question of whether or not scientists should play an active role in policy debates. Only 66% of Democrats say yes, and only 29% of Republicans (down from 75% and 43% respectively). This, to me, is very telling. It’s one thing to say you trust scientists, but what does that mean if you also don’t want them to play an active role in policy?

Continue Reading »

Oct 25, 2022

At a recent talk, during the Q&A an audience member asked me what I thought the consequences would be of the “idiocracy” we seem to be heading toward. I challenged the premise that people in general are becoming less intelligent. I know it may superficially seem like this, but that has more to do with media savvy, echo chambers, tribalism, and radicalization, not any demonstrable decline in raw intelligence.

In fact, I pointed out, in the last century there has been a consistent increase in IQ test performance, by about 3 IQ points per decade (called the Flynn Effect). There is still debate about what this means, and it is important to point out that IQ testing does not equal “intelligence”, which is multifaceted. But whatever standard IQ tests are measuring, performance is generally improving over time. Another measure, that of civic scientific literacy (in a longstanding series of studies by Jon Miller), increased from 9% to 29% between 1988 and 2008. It has since plateaued at that level.

There is no consensus as to why this is so, but I have some thoughts based on the literature. Technology is exposing people to more information, and this has only been increasing further with the advent of computers, the internet, and even social media. Regardless of any negative effects, people seem to know more stuff, and have improved problem-solving skills. Our brains are busier, they are exposed to more ideas and facts, we interact with a greater number of different people and opinions, and we have to interface with technology and information more. The workforce is shifting from manual labor to more intellectual labor. Even just going through your normal day likely involves interacting with technology that would have befuddled older generations.

Continue Reading »

Oct 01, 2021

There is pretty broad agreement that the pandemic was a net negative for learning among children. Schools are an obvious breeding ground for viruses, with hundreds or thousands of students crammed into the same building, moving to different groups in different classes, and with teachers being systematically exposed to many different students while they spray them with their possibly virus-laden droplets. Wearing masks, social distancing, and using plexiglass barriers reduce the spread, but not enough if we are in the middle of a pandemic surge. Only vaccines will make schools truly safe.

So it was reasonable, especially in the early days of the pandemic, to convert schooling to online classes until the pandemic was under control. The problem was – most schools were simply not ready for this transition. The worst off were those students who did not have access to a computer and the internet from home. The pandemic helped expose and exacerbate the digital divide. But even for students with good access, the experience was generally not good. Many teachers were not prepared to adapt their classes for online learning. Many parents did not have the ability to stay at home with their kids to monitor them. And many children were simply bored and not learning.

This is a classic infrastructure problem. Many technologies do not function well in a vacuum. You can’t have cars without roads, traffic control, licensing, safety regulations, and fueling stations. Mass online learning also requires significant infrastructure that we simply didn’t have.

Continue Reading »

Aug 26, 2021

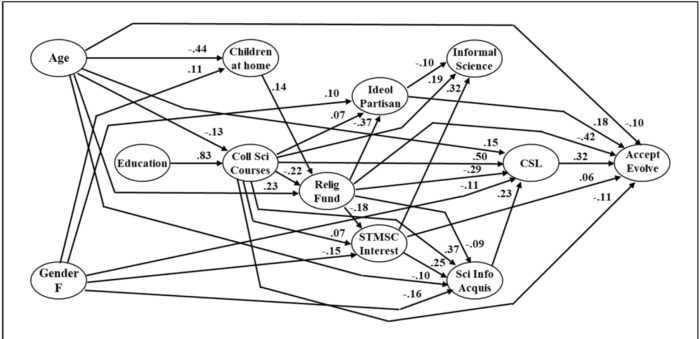

The idea that all life on Earth is related through a nested hierarchy of branching evolution, occurring over billions of years through entirely natural processes, is one of the biggest ideas ever to emerge from human science. It did not just emerge whole cloth from the brain of Charles Darwin; it had been percolating in the scientific community for decades. Darwin, however, put it all together in one long compelling argument. Alfred Wallace independently came up with essentially the same conclusion, although he did not develop it as far as Darwin.

On the Origin of Species was published in 1859, and it quickly won over the scientific community, with natural selection acting on variation becoming the dominant working hypothesis. But that, of course, was not the end of the story, only the beginning. If Darwin’s ideas were wrong, they would have slowly withered from lack of confirming evidence. But they were largely correct, even insightful. The last 162 years of research and observation have confirmed to an extraordinary degree the core ideas that life is related through branching connections, and that natural selection is a primary driving force of evolution. The theory has also evolved quite a bit, and is now a mature and complex scientific discipline sitting on top of mountains of evidence, including fossils, genetics, comparative anatomy, developmental biology, and direct observation. The basic fact of evolution could have been falsified thousands of times over, but it has survived every time – because it is essentially true.

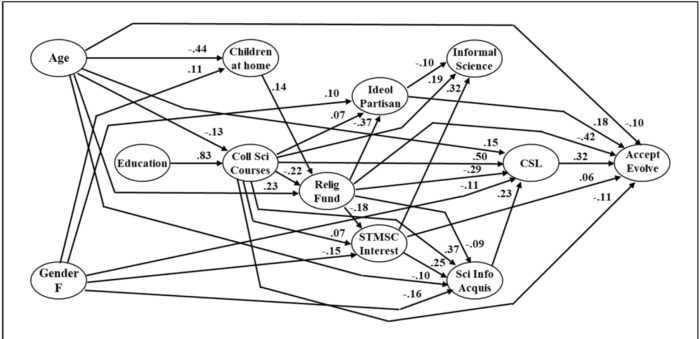

Acceptance of the basic tenets of evolutionary theory, therefore, is a good litmus test for any modern society. Of what, exactly, is another question, but certainly something is going wrong if the population does not accept this overwhelming scientific consensus. The US ranks second from the bottom (only Turkey is worse) in terms of accepting evolutionary theory. Researchers have been tracking the statistics for decades, and now some of the lead researchers in this field have published data from 1985 to 2020 (sorry it’s behind a paywall). There are some interesting details to pull from the numbers.

Continue Reading »

Sep 22, 2020

The issue of genetically modified organisms is interesting from a science communication perspective because it is the one controversy that apparently most follows the old knowledge deficit paradigm. The question is – why do people reject science and accept pseudoscience? The knowledge deficit paradigm states that they reject science in proportion to their lack of knowledge about science, which should therefore be fixable through straight science education. Unfortunately, most pseudoscience and science denial do not follow this paradigm, and are due to other factors such as lack of critical thinking, ideology, tribalism, and conspiracy thinking. But opposition to GMOs does appear to largely result from a knowledge deficit.

A 2019 study, in fact, found that as opposition to GM technology increased, scientific knowledge about genetics and GMOs decreased, but self-assessment increased. GMO opponents think they know the most, but in fact they know the least. Other studies show that consumers have generally low scientific knowledge about GMOs. There is also evidence that fixing the knowledge deficit, for some people, can reduce their opposition to GMOs (at least temporarily). We clearly need more research, and also different people oppose GMOs for different reasons, but at least there is a huge knowledge deficit here and reducing it may help.

So in that spirit, let me reduce the general knowledge deficit about GMOs. I have been tackling anti-GMO myths for years, but the same myths keep cropping up (pun intended) in any discussion about GMOs, so there is still a lot of work to do. To briefly review – no farmer has been sued for accidental contamination, farmers don’t generally save seeds anyway, there are patents on non-GMO hybrid seeds, GMOs have been shown to be perfectly safe, GMOs did not increase farmer suicide in India, and use of GMOs generally decreases land use and pesticide use.

Continue Reading »
