Oct 08, 2021

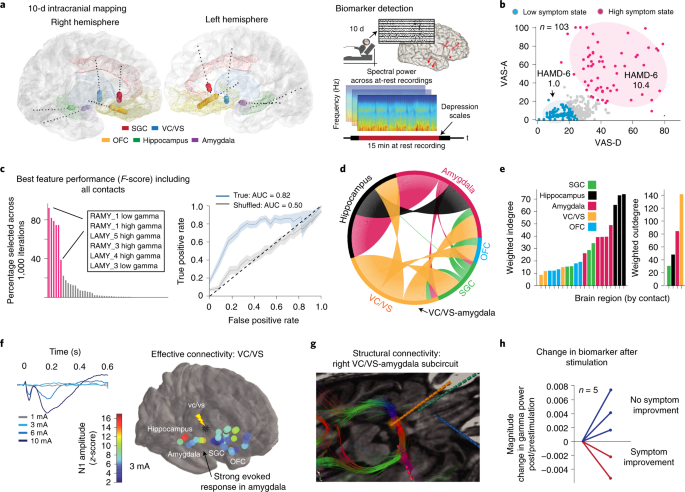

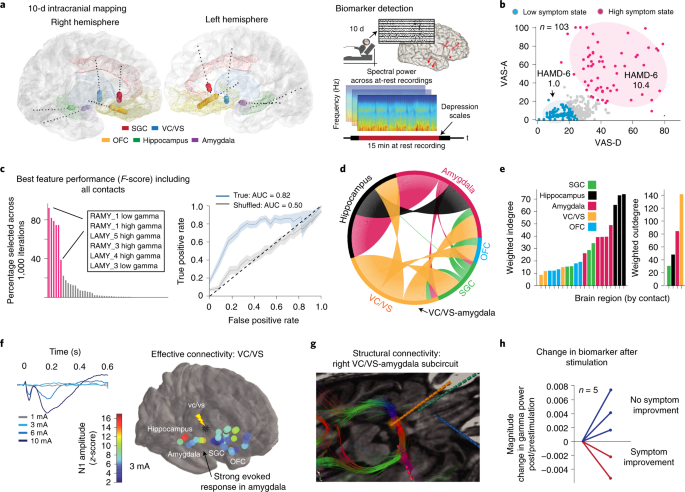

A new study published in Nature looks at a closed-loop implanted deep brain stimulator to treat severe and treatment-resistant depression, with very encouraging results. This is a report of a single patient, which is a useful proof of concept.

Severe depression can profoundly limit one’s life and increase the risk of suicide (depression affects 300 million people worldwide and causes most of the 800,000 annual suicides). Depression, like many mental disorders, is very heterogeneous, and is therefore not likely to be one specific disorder but a class of disorders with a variety of neurological causes. It also exists on a spectrum of severity, and it’s very likely that mild to moderate depression is phenomenologically different from severe depression. Severe depression can also in some cases be very treatment resistant, which simply means our current treatment options are probably not addressing the actual brain function that is causing the severe depression. We clearly need more options.

The pharmacological approach to severe depression has been very successful, but is still not effective in all patients. For “major” depression, which is severe enough to impact a person’s daily life, pharmacological therapy and talk therapy (such as CBT – cognitive behavioral therapy) seem to be equally effective. But again, these are statistical comparisons. Treatment needs to be individualized.

Continue Reading »

Oct 07, 2021

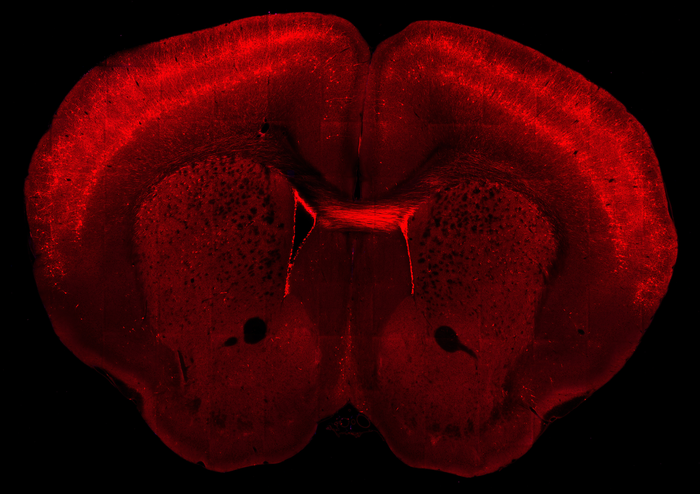

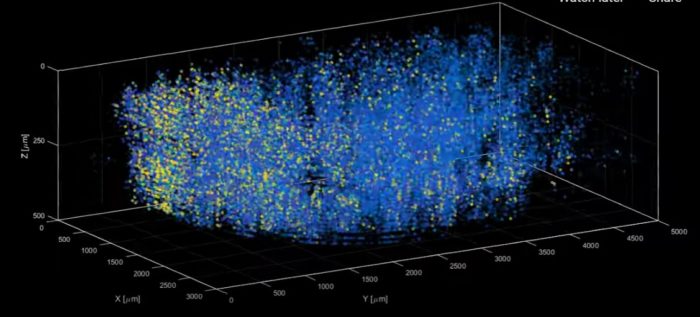

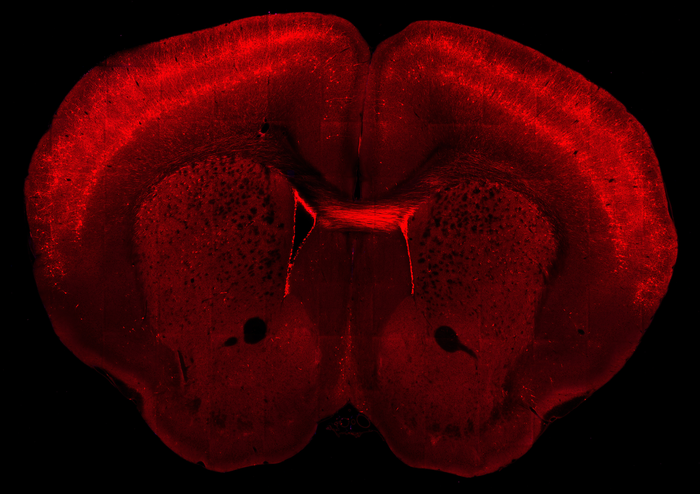

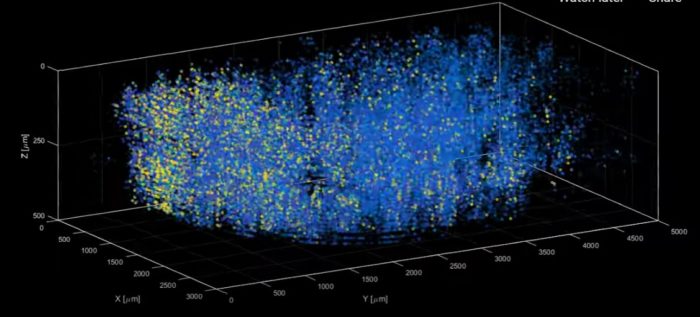

By now, especially if you are a regular reader here, you have probably heard of the connectome project, an attempt to entirely map the cells and connections of the human brain. This effort actually comprises multiple initiatives, one of which is the Brain Research Through Advancing Innovative Neurotechnologies (BRAIN) Initiative, funded by the NIH. They have now published in Nature their first major result – a map of the mammalian primary motor cortex (technically a “multimodal cell census and atlas of the mammalian primary motor cortex”).

The goal of this initiative is to break the brain down into its constituent parts and then see how they all fit together. This begins with knowing all the different brain cell types, and this is part of the string of publications they have produced. The brain contains about 160 billion cells, with 87 billion neurons and the rest glial cells such as astrocytes (which provide supporting and modulating functions). There are many different kinds of neurons, with significant functional differences. Neurons differ in their structure and their chemistry.

The basic structure of a neuron is a cell body with dendrites (hair-like projections) for incoming signals and axons (longer projections) for outgoing signals. But the shape, number, and arrangement of dendrites and axons can vary considerably, and reflect their function, which relates to the pattern of connections the neuron makes. Neurons also differ in terms of their biochemistry – which neurotransmitters they make, and which neurotransmitter receptors they have. Some neurotransmitters like glutamate are activating (make neurons fire faster) and others like GABA are inhibitory (make them fire slower or not at all).

Continue Reading »

Oct 05, 2021

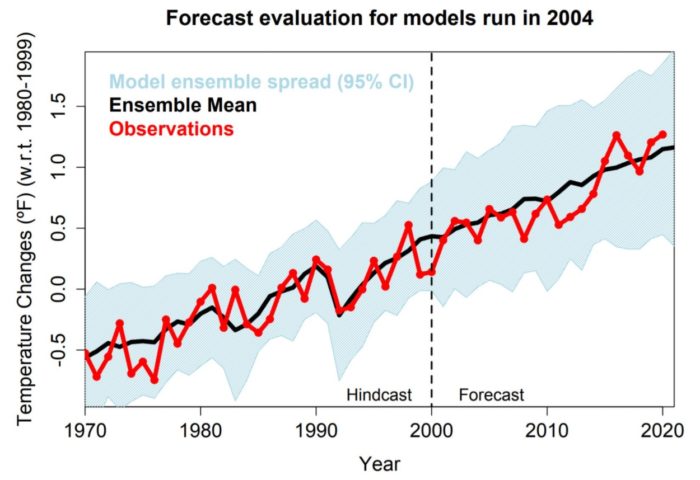

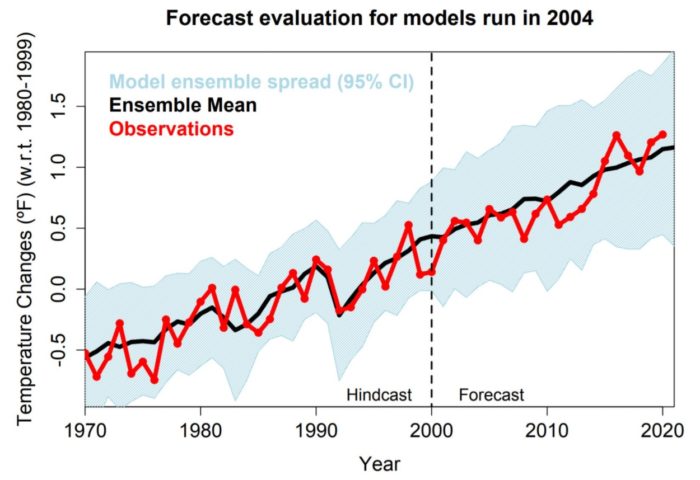

Syukuro Manabe, Klaus Hasselmann, and Giorgio Parisi share this year’s Nobel Prize in Physics for their work increasing our understanding of how complex systems work. This is a powerful tool for understanding the world, which reminds me of previous advances in our understanding of how gases behave.

Gases are a phase of matter in which high energy particles are bouncing around at random. It would be impossible to predict the pathway of any individual gas molecule. However, collectively all of this random complexity follows very predictable laws. Similarly, weather is a very complex system. We can predict weather that is about to happen, but beyond a few days it becomes increasingly difficult. The system is simply too chaotic. However, climate (long term weather trends) follows theoretically predictable patterns. The trick is to see the hidden patterns in the chaos, and that is the work that these three physicists did.

Manabe and Hasselmann share half the prize for their work on climate models:

Syukuro Manabe demonstrated how increased levels of carbon dioxide in the atmosphere lead to increased temperatures at the surface of the Earth. In the 1960s, he led the development of physical models of the Earth’s climate and was the first person to explore the interaction between radiation balance and the vertical transport of air masses. His work laid the foundation for the development of current climate models.

About ten years later, Klaus Hasselmann created a model that links together weather and climate, thus answering the question of why climate models can be reliable despite weather being changeable and chaotic. He also developed methods for identifying specific signals, fingerprints, that both natural phenomena and human activities imprint in the climate. His methods have been used to prove that the increased temperature in the atmosphere is due to human emissions of carbon dioxide.

Continue Reading »

Oct 04, 2021

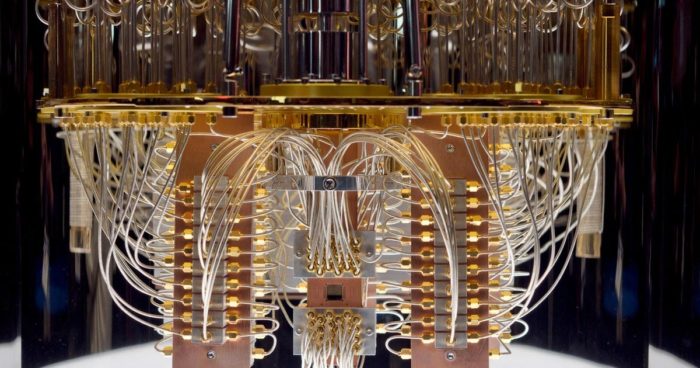

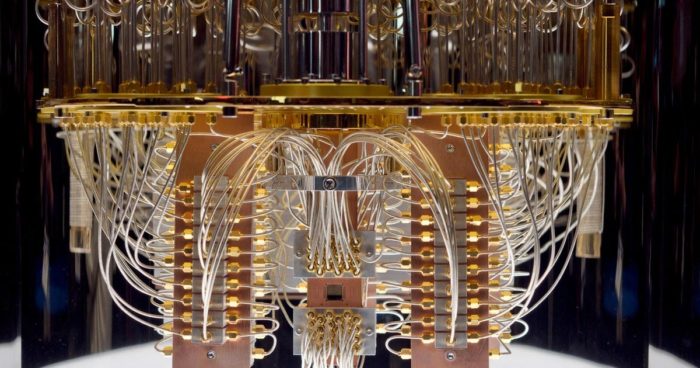

Quantum computing is an exciting technology with tremendous potential. However, at present that is exactly what it remains – a potential, without any current application. It’s actually a great example of the challenges of trying to predict the future. If quantum computing succeeds, the implications could be enormous. But at present, there is no guarantee that quantum computing will become a reality, and if so how long it will take. So if we try to imagine the world 50 or 100 years in the future, quantum computing is a huge variable we can’t really predict at this point.

The technology is moving forward, but significant hurdles remain. I suspect that for the next 2-3 decades the “coming quantum computer revolution” will be similar to the “coming hydrogen economy,” in that it never came. But the technology continues to progress, and it might come yet.

What is quantum computing? Here is the quick version – a quantum computer exploits the weird properties of quantum mechanics to perform computing operations. Instead of classical “bits” where a unit of information is either a “1” or “0”, a quantum bit (or qubit) is in a state of quantum superposition, and can have any value between 0 and 1 inclusive. This means that each qubit contains a vastly greater amount of information than a classical bit, especially as they scale up in number. A theoretical quantum computer with one million qubits could perform operations in minutes that would take a universe full of classical supercomputers billions of years to perform (in other words, operations that are essentially impossible for classical computers). It’s no wonder that IBM, Google, China, and others are investing heavily in this technology.
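To make the scaling claim a bit more concrete, here is a minimal Python sketch. It rests on a standard fact that the paragraph above does not spell out: describing n qubits on a classical machine generally means tracking 2^n complex amplitudes. The function names and the 16-bytes-per-amplitude figure (two 64-bit floats) are my own illustrative assumptions, not anything taken from a particular quantum computing library.

```python
# Illustrative sketch only: how fast a classical description of n qubits grows.
# Assumption: a full state vector with one complex amplitude per basis state,
# stored as two 64-bit floats (16 bytes). All names here are hypothetical.

BYTES_PER_AMPLITUDE = 16


def amplitudes_needed(num_qubits: int) -> int:
    """Number of complex amplitudes in a full n-qubit state vector (2**n)."""
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return 2 ** num_qubits


def state_vector_bytes(num_qubits: int) -> int:
    """Memory needed to store that state vector under the assumption above."""
    return amplitudes_needed(num_qubits) * BYTES_PER_AMPLITUDE


if __name__ == "__main__":
    for n in (1, 10, 30, 50):
        print(f"{n} qubits: {amplitudes_needed(n)} amplitudes, "
              f"{state_vector_bytes(n)} bytes")
```

Under these assumptions 30 qubits already need 16 GiB just to write the state down, and every additional qubit doubles that figure.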

But there are significant technological hurdles that remain. Quantum computer operations leverage quantum entanglement (where the physical properties of two particles are linked) among the qubits in order to get to the desired answer, but that answer is only probabilistic. In order to know that the quantum computer is working at all, researchers check the answers with a classical computer. Current quantum computers are running at about a 1% error rate. That sounds low, but for a computer it’s huge, essentially rendering the computer useless for any large calculations (the ones that quantum computers would be useful for).
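To see why a 1% error rate is so damaging, consider a rough back-of-the-envelope sketch. It assumes, purely for illustration, that each operation fails independently with the same probability and that there is no error correction; real devices are more complicated than that. The function name is hypothetical.

```python
# Rough illustration, not a model of any real device.
# Assumption: every operation fails independently with the same probability,
# and nothing corrects errors along the way.


def error_free_probability(error_rate: float, num_operations: int) -> float:
    """Chance that all num_operations operations succeed in a row."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    if num_operations < 0:
        raise ValueError("num_operations must be non-negative")
    return (1.0 - error_rate) ** num_operations


if __name__ == "__main__":
    for n in (10, 100, 1000):
        p = error_free_probability(0.01, n)
        print(f"{n} operations at 1% error: {p:.6f} chance of no errors")
```

With these assumptions the chance of an error-free run is already well under one half after 100 operations, and it keeps shrinking exponentially as the calculation gets longer.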

Continue Reading »

Oct 01, 2021

There is pretty broad agreement that the pandemic was a net negative for learning among children. Schools are an obvious breeding ground for viruses, with hundreds or thousands of students crammed into the same building, moving to different groups in different classes, and with teachers being systematically exposed to many different students while they spray them with their possibly virus-laden droplets. Wearing masks, social distancing, and using plexiglass barriers reduce the spread, but not enough if we are in the middle of a pandemic surge. Only vaccines will make schools truly safe.

So it was reasonable, especially in the early days of the pandemic, to convert schooling to online classes until the pandemic was under control. The problem was – most schools were simply not ready for this transition. The worst off were those students who did not have access to a computer and the internet from home. The pandemic helped expose and exacerbate the digital divide. But even for students with good access, the experience was generally not good. Many teachers were not prepared to adapt their classes for online learning. Many parents did not have the ability to stay at home with their kids to monitor them. And many children were simply bored and not learning.

This is a classic infrastructure problem. Many technologies do not function well in a vacuum. You can’t have cars without roads, traffic control, licensing, safety regulations, and fueling stations. Mass online learning also requires significant infrastructure that we simply didn’t have.

Continue Reading »

Sep 30, 2021

Last year YouTube (owned by Google) banned videos spreading misinformation about the COVID vaccines. The policy resulted in the removal of over 130,000 videos since last year. Now they announce that they are extending the ban to misinformation about any approved vaccine. The move is sure to provoke strong reactions and opinions on both sides, which I think is reasonable. The decision reflects a genuine dilemma of modern life with no perfect solution, and amounts to a “pick your poison” situation.

The case against big tech companies that control massive social media outlets essentially censoring certain kinds of content is probably obvious. That puts a lot of power into the hands of a few companies. It also goes against the principle of a free marketplace of ideas. In a free and open society people enjoy freedom of speech and the right to express their ideas, even (and especially) if they are unpopular. There is also a somewhat valid slippery slope argument to make – once the will and mechanisms are put into place to censor clearly bad content, mission creep can slowly impinge on more and more opinions.

There is also, however, a strong case to be made for this kind of censorship. There has never been a time in our past when anyone could essentially claim the right to a massive megaphone capable of reaching most of the planet. Our approach to free speech may need to be tweaked to account for this new reality. Further, no one actually has the right to speech on any social media platform – these are not government sites nor are they owned by the public. They are private companies that have the right to do anything they wish. The public, in turn, has the power to “vote with their dollars” and choose not to use or support any platform whose policies they don’t like. So the free market is still in operation.

Continue Reading »

Sep 28, 2021

The current estimate is that the average human brain contains 86 billion neurons. These neurons connect to each other in a complex network, involving 100 trillion connections. The job of neuroscientists is to map all these connections and to see how they work – no small task. There are multiple ways to approach this task.

At first neuroscientists just looked at the brain and described its macroscopic (or “gross”) anatomical structures. We can see there are different lobes of the brain and major connecting cables. You can also slice up the brain and see its internal structure. When the microscope was developed we could then look at the microscopic structure of the brain, and by using different staining techniques we could visualize the branching structure of axons and dendrites (the parts of neurons that connect to other neurons). We could see that there were different kinds of neurons, various layers in the cortex, and lots of pathways and nodes.

But even when we had a detailed map of the neuroanatomy of the brain down to the microscopic level, we still needed to know how it all functioned. We needed to see neurons in action. (And further, there are lots of non-neuronal cells in the brain such as astrocytes that also affect brain function.) At first we were able to infer what different parts of the brain did by examining people who had damage to one part of the brain. Damage to the left temporal lobe in most people causes language deficits, so this part of the brain must be involved in language processing. We could also do research on animals for all but the highest brain functions.

Continue Reading »

Sep 27, 2021

The story of exactly how and when people from other continents populated the Americas is still unfolding. Scientists have uncovered stunning new evidence – scores of human footprints in New Mexico dating to 21-23 thousand years ago, 5-7 thousand years older than the previous oldest evidence.

The evidence is pretty clear now that humans evolved in Africa and later spread throughout the world. We were not the first hominid species to leave Africa; that was Homo erectus, who spread to Europe and Asia about 1.8 million years ago. Meanwhile those that remained in Africa continued to evolve into other species, including Homo heidelbergensis, which is the current best candidate for the most recent common ancestor between modern humans and Neanderthals. Heidelbergensis also migrated out of Africa, and in Europe and Asia evolved into Neanderthals (who were well established by 400,000 years ago). Meanwhile their cousins back in the homeland evolved into modern humans.

Modern humans migrated out of Africa about 80,000 years ago, and spread throughout the world. Getting to Europe and Asia is easy, because they are all connected by land. Getting to the Pacific islands was probably through a combination of land bridges during times of low ocean levels and traveling across water by some means, probably with short distance island hopping. The same is true of Australia: some combination of land bridges and island hopping.

Continue Reading »

Sep 23, 2021

The robots are coming. Of course, they are already here, mostly in the manufacturing sector. Robots designed to function in the much softer and more chaotic environment of a home, however, are still in their infancy (mainly toys and vacuum cleaners). Slowly but surely, however, robots are spreading out of the factory and into places where they interact with humans. As part of this process, researchers are studying how people socially react to robots, and how robot behavior can be tweaked to optimize this interaction.

We know from prior research that people react to non-living things as if they are real people (technically, as if they have agency) if they act as if they have a mind of their own. Our brains sort the world into agents and objects, and this categorization seems to entirely depend on how something moves. Further, emotion can be conveyed with minimalistic cues. This is why cartoons work, or ventriloquist dummies.

A humanoid robot that can speak and has basic facial expressions, therefore, is way more than enough to trigger in our brains the sense that it is a person. The fact that it may be plastic, metal, and glass does not seem to matter. But still, intellectually, we know it is a robot. Let’s further assume for now we are talking about robots with narrow AI only, no general AI or self-awareness. Cognitively the robot is a computer and nothing more. We can now ask a long list of questions about how people will interact with such robots, and how to optimize their behavior for their function.

Continue Reading »

Sep 21, 2021

Are you afraid of spiders? I mean, really afraid, to the point that you will alter your plans and your behavior in order to specifically reduce the chance of encountering one of these multi-legged creatures? Intense fears, or phobias, are fairly common, affecting 3-15% of the population. The technical definition (from the DSM-5) of phobia contains a number of criteria, but basically it is a fear or anxiety, provoked by a specific object or situation, that is persistent, unreasonable and debilitating. In order to be considered a disorder:

“The fear, anxiety, or avoidance causes clinically significant distress or impairment in social, occupational, or other important areas of functioning.”

The most effective treatment for phobias is exposure therapy, which gradually exposes the person suffering from a phobia to the thing or situation which provokes fear and anxiety. This allows them to slowly build up a tolerance to the exposure (desensitization), to learn that their fears are unwarranted and to reduce their anxiety. Exposure therapy works, and reviews of the research show that it is effective and superior to other treatments, such as cognitive therapy alone.

But there can be practical limitations to exposure therapy. One is the inability to find an initial exposure scenario that the person suffering from a phobia will accept. For example, you may be so phobic of spiders that any exposure is unacceptable, and so there is no way to begin the process of exposure therapy. For these reasons there has been a great deal of interest in using virtual/augmented reality for exposure therapy for phobia. A 2019 systematic review including nine studies found that VR exposure therapy was as effective as “in vivo” exposure therapy for agoraphobia (fearing situations like crowds that trigger panic) and specific phobias, but not quite as effective for social phobia.

Continue Reading »
