Jan 03 2017

More Evidence for Motivated Reasoning

A recent neuroscientific study looked at what happens in the brains of subjects when their beliefs were challenged. The study adds a new bit of evidence to our understanding of motivated reasoning.

Before we get to the details of the study, let’s review what we mean by motivated reasoning. Psychological studies have shown that people treat different beliefs differently. Specifically, there is one set of beliefs that are core to a person’s identity and to which they have an emotional attachment. We treat such beliefs differently than all other beliefs.

For most beliefs people actually are quite rational at baseline. We tend to follow a Bayesian approach, meaning that we update our beliefs as new information comes to our attention. If we are told that some historical fact is different than what we remember, we will quickly change our beliefs about that historical fact. Further, the more information we have about something, the more solid our belief is and the more slowly we will change it. We don’t just jump from one belief to the next; we incorporate the new information with our old information.

This is actually a very scientific approach. I would not easily change my belief that the sun is at the center of our solar system. It would take a profound amount of very reliable information to counter all the solid scientific information on which my current belief is based. If, however, I were told by a reliable source something about George Washington I had never heard before, I would accept it much more quickly. This is reasonable, and this is how most people function day-to-day.
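To make the Bayesian idea concrete, here is a minimal sketch in Python. The prior probabilities and the strength of the contrary evidence are made-up numbers chosen only for illustration, and `bayes_update` is a hypothetical helper name. It applies Bayes' rule in odds form: posterior odds equal prior odds times the likelihood ratio of the new evidence.

```python
def bayes_update(prior, likelihood_ratio):
    """Return the posterior probability of a belief after one piece of evidence.

    prior: probability assigned to the belief before the evidence (0 < prior < 1)
    likelihood_ratio: P(evidence | belief true) / P(evidence | belief false);
        values below 1 count against the belief.
    """
    prior_odds = prior / (1 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1 + posterior_odds)


# Assumed for illustration: the same contrary evidence in both cases,
# 20 times more likely if the belief is false than if it is true.
contrary_evidence = 1 / 20

# A belief backed by a mountain of prior evidence (assumed prior).
heliocentrism = bayes_update(prior=0.999999, likelihood_ratio=contrary_evidence)

# A casually held historical belief (assumed prior).
washington_fact = bayes_update(prior=0.7, likelihood_ratio=contrary_evidence)

print(f"Sun-centered solar system: {heliocentrism:.5f}")
print(f"George Washington detail:  {washington_fact:.5f}")
```

With these assumed numbers the well-supported belief barely moves, while the lightly held one drops from likely to unlikely. That is the pattern described above: the same evidence shifts a belief more or less depending on how much prior information stands behind it.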

The problem comes from our special set of beliefs in which we have an emotional investment. These are alleged facts or beliefs about the world that support our sense of identity or our ideology. We commonly call such beliefs “sacred cows.”

When those beliefs are challenged we don’t take a rational and detached approach. We dig in our heels and engage in motivated reasoning. We defend the core beliefs at all costs, shredding logic, discarding inconvenient facts, making up facts as necessary, cherry picking only the facts we like, engaging in magical thinking, and using subjective judgments as necessary without any consideration for internal consistency. We collectively refer to these processes as motivated reasoning, something at which people generally excel.

In general political opinions tend to fall into the “sacred cow” category. People tend to identify with their political tribe, and want to believe that their tribe is virtuous and smart, while the other tribe are mostly lying idiots. Of course, these dichotomies occur on a spectrum. You can have a little bit of an emotional attachment to a belief, or it can be fundamental to your world view and identity. You can be a little tribal in your political views, or hyperpartisan.

Unfortunately, what appears to have happened in the last 30 years is an overall increase in partisanship. This is extremely counterproductive to the functioning of our democracy.

The New Study

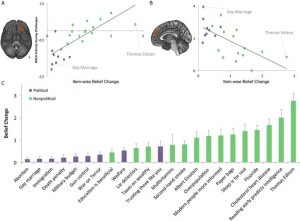

The recently published study does not really change anything, but it adds a bit of confirmation to the basic understanding I outlined above. This is an fMRI study of 40 liberal subjects. They were presented with statements that were designed to be either political or non-political. They were then confronted with counterclaims meant to contradict those statements, some of which were exaggerated or untrue.

An example of a political claim is that the US spends too much of its resources on the military. The counterclaim is that Russia’s nuclear arsenal is twice the size of the US’s (which is not true – Russia has 7,300 warheads to our 7,100). An example of a non-political claim is “Thomas Edison invented the lightbulb.” One counter to that claim is that, “Nearly 70 years before Edison, Humphry Davy demonstrated an electric lamp to the Royal Society.” This is true, but an exaggeration in that Davy’s incandescent bulb was not practical. Edison’s was not the first light bulb, but it was the first one with the properties necessary to make its wide use feasible.

The researchers looked at the activity in the subjects’ brains when they were confronted with a counterclaim to a political vs non-political opinion. When a political belief was challenged, more of the brain lit up, including areas known as the “default mode network” and also the amygdala. The former may be involved in identity, and the latter in negative emotions.

It is always difficult to interpret such studies. First, 40 subjects is a small number, and fMRI studies involve a low signal-to-noise ratio. Assuming the results are valid, we also don’t know exactly what they mean. Just because we can see one part of the brain light up, that does not mean we know what it was doing. The same structure in the brain will participate in different overlapping networks with different overlapping functions.

It does seem clear, however, that the brain responds differently to political and non-political challenges, in a way that is suggestive of an emotional response.

The researchers also assessed the degree to which the subjects changed their minds on the facts that were challenged. They changed them more for non-political than for political views. Again, there are lots of variables here, and no one study is going to account for all of them.

Conclusion

This new study is consistent with prior research on the topic of motivated reasoning, and also points the way to further research. Follow-up studies would all be helpful: studies with conservatives, with other ideologies (religious, social, historical), with larger numbers of subjects, and studies that address other variables more directly, like how truthful or plausible the counterclaims are.

The study is another reminder, however, that humans are emotional creatures. I do think it would be helpful to make a specific effort to be more detached when it comes to ideological beliefs. Factual beliefs about the world should not be a source of identity, because those facts may be wrong, partly wrong, or incomplete.

This is easier said than done. My strategy has been to focus my emotional investment on being skeptical. Take pride in being detached when it comes to factual claims, in following a valid process of logic and empiricism, and in changing your mind when necessary. It is important to focus on the validity of the process, not on any particular claim or set of beliefs.

I also think we need to remind ourselves that people who disagree with us are just people. They are not demons. They have their reasons for believing what they do. They think they are right just as much as you think you are right. They don’t disagree with you because you are virtuous and they are evil. They just have a different narrative than you, and your narrative is likely just as subjective and flawed as theirs.