Jun 04 2020

fMRI Researcher Questions fMRI Research

This is an important and sobering study that I fear will not get a lot of press attention – especially in the context of current events. It is a bit wonky, but this is exactly the level of knowledge one needs in order to have any chance of consuming scientific research and putting it into context.

I have discussed fMRI previously – it stands for functional magnetic resonance imaging. It uses MRI technology to image blood flow to different parts of the brain, and from that infer brain activity. It is used more in research than clinically, but it does have some clinical application – if, for example, we want to see how active a lesion in the brain is. In research it is used to help map the brain, to image how different parts of the brain network and function together. It is also used to see which part of the brain lights up when subjects engage in specific tasks. It is this last application of fMRI that was studied.

Professor Ahmad Hariri from Duke University just published a reanalysis of the last 15 years of his own research, calling into question its validity. Any time someone points out that an entire field of research might have some fatal problems, it is reason for concern. But I do have to point out the obvious silver lining here – this is the power of science: self-correction. This is a dramatic example, with a researcher questioning his own work and unafraid to publish a study that might wipe out the last 15 years of it.

This realization does not come out of the blue. Neuroscientists have known since the beginning that this task-specific use of fMRI was tricky, and a lot of the published data is questionable. This is what motivated Hariri to look at his own research. In this paradigm a subject is put into an fMRI while they are asked to engage in a specific task. The goal of the study is to then correlate the task with the parts of the brain that light up. This is supposed to tell us what that part of the brain does.

Sounds simple, but the data has never been clean. The primary problem is that there is a lot of noise and variability. It is not as if the brain is mostly quiet and then one or a few areas clearly light up. Rather, the wakeful brain is constantly active, causing different parts of the brain to light up all the time (this is the noise). This kind of research therefore has to use multiple subjects and multiple trials to average out the fMRI activity, in order to pull a signal out of this noise. One of the main criticisms of this technique, however, is that the signal-to-noise ratio is rather low, which reduces confidence in the results.
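To make the averaging point concrete, here is a minimal sketch in Python. It is a toy simulation, not anything taken from Hariri's analysis: the function names, the idea of reducing a brain region's response to a single number, and the noise level are all assumptions chosen purely for illustration. It shows how the spread of an averaged measurement shrinks as more trials are averaged.

```python
import random
import statistics

def averaged_estimate(signal, noise_sd, n_trials, rng):
    """Average n_trials noisy measurements of one underlying signal (toy model)."""
    trials = [signal + rng.gauss(0.0, noise_sd) for _ in range(n_trials)]
    return statistics.fmean(trials)

def spread_of_estimates(signal, noise_sd, n_trials, n_repeats=2000, seed=0):
    """Repeat the whole experiment many times and report how much
    the averaged estimate varies from one repeat to the next."""
    rng = random.Random(seed)
    estimates = [
        averaged_estimate(signal, noise_sd, n_trials, rng)
        for _ in range(n_repeats)
    ]
    return statistics.stdev(estimates)

# Hypothetical numbers: a true signal of 1.0 buried in noise four times larger.
for n in (1, 16, 64):
    print(n, "trials -> spread of the average:",
          round(spread_of_estimates(1.0, 4.0, n), 2))
```

In this toy model the spread falls roughly with the square root of the number of trials, so quadrupling the trials only halves the noise. That is why a weak signal needs a great deal of averaging before it can be trusted.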

Another source of noise is that we cannot control or independently verify what is happening in the minds of the subjects. They may be thinking about what they had for breakfast when they are supposed to be doing the task. So one way to determine how reliable the results are is to do a test-retest analysis. Researchers ask a subject to do a task while they take an fMRI image, then an hour later (or a day, or a month) they do it again. This is exactly the reanalysis that Hariri did: he looked at the test-retest reliability of fMRI studies. He basically found that there isn’t any.

Hariri and his colleagues reexamined 56 published papers based on fMRI data to gauge their reliability across 90 experiments. Hariri said the researchers recognized that “the correlation between one scan and a second is not even fair, it’s poor.”

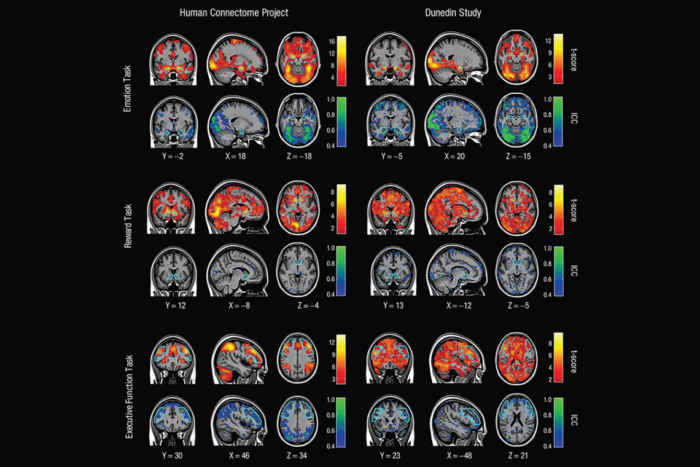

They also examined data from the brain-scanning Human Connectome Project — “Our field’s Bible at the moment,” Hariri called it — and looked at test/retest results for 45 individuals. For six out of seven measures of brain function, the correlation between tests taken about four months apart with the same person was weak. The seventh measure studied, language processing, was only a fair correlation, not good or excellent.

Finally they looked at data they collected through the Dunedin Multidisciplinary Health and Development Study in New Zealand, in which 20 individuals were put through task-based fMRI twice, two or three months apart. Again, they found poor correlation from one test to the next in an individual.
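The test-retest logic described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the method used in the study: each simulated subject has a stable "true" activation plus independent noise on each scan, a plain Pearson correlation stands in for whatever reliability statistic the researchers actually used, and the cutoffs in `reliability_label` are a commonly cited convention that is assumed here rather than taken from the paper. All names and numbers are hypothetical.

```python
import math
import random

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def simulate_test_retest(n_subjects, trait_sd, noise_sd, seed=0):
    """Scan every simulated subject twice and correlate the two sessions."""
    rng = random.Random(seed)
    # Stable individual differences: what the scans are supposed to capture.
    traits = [rng.gauss(0.0, trait_sd) for _ in range(n_subjects)]
    # Each session adds its own independent noise on top of the stable part.
    scan1 = [t + rng.gauss(0.0, noise_sd) for t in traits]
    scan2 = [t + rng.gauss(0.0, noise_sd) for t in traits]
    return pearson(scan1, scan2)

def reliability_label(r):
    """Assumed conventional cutoffs for describing a reliability coefficient."""
    if r < 0.40:
        return "poor"
    if r < 0.60:
        return "fair"
    if r < 0.75:
        return "good"
    return "excellent"

# Hypothetical settings: session noise twice as large as the stable differences,
# then session noise much smaller than the stable differences.
for noise_sd in (2.0, 0.3):
    r = simulate_test_retest(n_subjects=5000, trait_sd=1.0, noise_sd=noise_sd)
    print("noise_sd =", noise_sd, "->", round(r, 2), reliability_label(r))
```

In this model the expected correlation is the stable variance divided by the total variance. Once session-to-session noise dominates, the two scans of the same person barely agree, no matter how many subjects are included.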

This seriously calls into question the validity of this entire approach to fMRI research. We will see what the research community has to say about it, and whether anything can be rescued from the existing research. It also remains to be seen what the remedy should be. Should neuroscientists abandon this technique, or find ways to refine it? For example, subjects could be kept in the fMRI scanner for much longer to produce a more consistent image. Studies could also do more test-retest analysis internally.

The real problem, however, may be the premises underlying this approach. I wrote about this 12 years ago, stating at the time that this was a major problem for this type of fMRI study. This gets to the “modules vs networks” debate among neuroscientists. Is the brain fundamentally organized as discrete modules that are responsible for specific kinds of processing, or is the main organization around networks across different parts of the brain? The answer, it seems, is yes. Both are true: there are networks of modules.

But what is the exact nature of these modules, which in essence is what fMRI is trying to image in this type of research – which “modules” light up? It seems that modules do not necessarily correlate with a specific ability or function, but rather, perhaps, with a type of information processing that can serve multiple different functions. Modules may, therefore, serve different functions when they are part of different networks. Perhaps the networks themselves are also flexible, depending on exactly how a task is being completed. Different but similar networks can do roughly the same thing in slightly different ways.

This variability is likely to be task-specific, with some tasks (like language) being fairly consistent, while other, more abstract tasks are highly variable. The bottom line is that brain function is incredibly complex, and it may be a level or two more complex than the research paradigms we are currently using to try to figure it out. In other words, the task-specific fMRI paradigm may be fatally flawed, not just technically limited. Time will tell.

Hariri also points out, however, that the networks approach to fMRI has much greater validity. This paradigm works – using fMRI to image network activity in the brain to see how parts of the brain are wired together. The relative success of this approach may be telling us something about the relative contribution of networks vs modules. But also the networks approach is just trying to figure out anatomy, how the brain is wired together, and is not task-specific. It is the task-specific part that seems to be the problem, likely for the reasons I state above.

This all may seem like a giant step backwards, but it’s all part of how science works. This is still information – knowing that brain module activity is variable from task execution to task execution is a useful insight. Negative results are still results. It may suck that it took 15 years to figure that out, but we are studying the most complex thing we know of in the universe, so no one expected this to be easy.