Feb 16, 2026

It’s not easy being a futurist (which I guess I technically am, having written a book about the future of technology). It never was, judging by the predictions of past futurists, but it seems to be getting harder as the future is moving more and more quickly. Even if we don’t get to something like “The Singularity”, the pace of change in many areas of technology is speeding up. Actually it’s possible this may, paradoxically, be good for futurists. We get to see fairly quickly how wrong our predictions were, and so have a chance at making adjustments and learning from our mistakes.

We are now near the beginning of many transformative technologies – genetic engineering, artificial intelligence, nanotechnology, additive manufacturing, robotics, and brain-machine interface. Extrapolating these technologies into the future is challenging. How will they interact with each other? How will they be used and accepted? What limitations will we run into? And (the hardest question) what new technologies not on that list will disrupt the future of technology?

While we are dealing with these big questions, let’s focus on one specific technology – controllable robotic prosthetics. I have been writing about this for years, and this is an area that is advancing more quickly than I had anticipated. The reason for this is, briefly, AI. Recent advances in AI are allowing for far better brain-machine interface control than previously achievable, because the technology is really good at picking out patterns from tons of noisy data. This includes picking out patterns in EEG signals from a noisy human brain.

This matters when the goal is having a robotic prosthetic limb controlled by the user through some sort of BMI (from nerves, muscles, or directly from the brain). There are always two components to this control – the software driving the robotic limb has to learn what the user wants, and the user has to learn how to control the limb. Traditionally this takes weeks to months of training, in order to achieve a moderate but usable degree of control. By adding AI to the computer-learning end of the equation, this training time is reduced to days, with far better results. This is what has accelerated progress by a couple of decades beyond where I thought it would be.

Continue Reading »

Feb 12, 2026

There are many ways in which our brains can be hacked. The brain is a complex, overlapping set of algorithms evolved to help us interact with our environment to enhance survival and reproduction. However, while we evolved in the natural world, we now live in a world of technology, which gives us the ability to control our environment. We no longer have to simply adapt to the environment; we can adapt the environment to us. This partly means that we can alter the environment to “hack” our adaptive algorithms. Now we have artificial intelligence (AI) that has become a very powerful tool to hack those brain pathways.

In the last decade chatbots have blown past the Turing Test – which is a type of test in which a blinded evaluator has to tell the difference between a live person and an AI through conversation alone. We appear to still be on the steep part of the curve in terms of improvements in these large language models and other forms of AI. What these applications have gotten very good at is mimicking human speech – including pauses, inflections, sighing, “ums”, and all the other imperfections that make speech sound genuinely human.

As an aside, these advances have rendered many sci-fi visions of the future quaint and obsolete. In Star Trek, for example, even a couple hundred years in the future computers still sounded stilted and artificial. We could, however, retcon this choice to argue that the stilted computer voices of the sci-fi future were deliberate, and not a limitation of the technology. Why would they do this? Well…

Current AI is already so good at mimicking human speech, including the underlying human emotion, that people are forming emotional attachments to chatbots, or being emotionally manipulated by them. People are, literally, falling in love with their chatbots. You might argue that they just “think” they are falling in love, or they are pretending to fall in love, but I see no reason not to take them at their word. I’m also not sure there is a meaningful difference between thinking one has fallen in love and actually falling in love – the same brain circuits, neurotransmitters, and feelings are involved.

Continue Reading »

Dec 29, 2025

Definitely the most fascinating and perhaps controversial topic in neuroscience, and one of the most intense debates in all of science, is the ultimate nature of consciousness. What is consciousness, specifically, and what brain functions are responsible for it? Does consciousness require biology, and if not what is the path to artificial consciousness? This is a debate that possibly cannot be fully resolved through empirical science alone (for reasons I have stated and will repeat here shortly). We also need philosophy, and an intense collaboration between philosophy and neuroscience, informing each other and building on each other.

A new paper hopes to push this discussion further – “On biological and artificial consciousness: A case for biological computationalism.” Before we delve into the paper, let’s set the stage a little bit. By consciousness we mean not only the state of being wakeful and conscious, but the subjective experience of our own existence and at least a portion of our cognitive state and function. We think, we feel things, we make decisions, and we experience our sensory inputs. This itself provokes many deep questions, the first of which is – why? Why do we experience our own existence? Philosopher David Chalmers asked an extremely provocative question – could a creature have evolved that is capable of all of the cognitive functions humans have but does not experience its own existence (a creature he termed a philosophical zombie, or p-zombie)?

Part of the problem with this question is: how could we know whether an entity was experiencing its own existence? If a p-zombie could exist, then any artificial intelligence (AI), even one capable of duplicating human-level intelligence, could be a p-zombie. If so, what is different between the AI and biological consciousness? At this point we can only ask these questions; some of them may need to wait until we actually develop human-level AI.

Continue Reading »

Dec 01, 2025

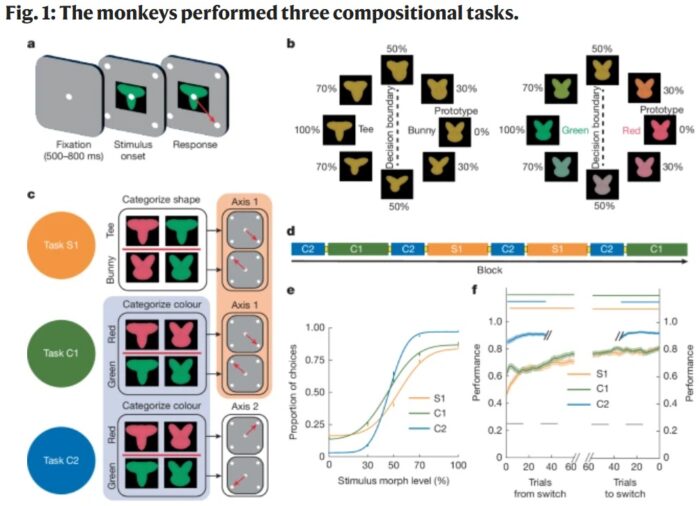

We have all likely had the experience that when we learn a task it becomes easier to learn a distinct but related task. Learning to cook one dish makes it easier to learn other dishes. Learning how to repair a radio helps you learn to repair other electronics. Even more abstractly – when you learn anything it can improve your ability to learn in general. This is partly because primate brains are very flexible – we can repurpose knowledge and skills to other areas. This is related to the fact that we are good at finding patterns and connections among disparate items. Language is also a good example of this – puns and witty linguistic humor are often based on making a connection between words in different contexts (I tried to tell a joke about chemistry, but there was no reaction).

Neuroscientists are always trying to understand what we call the “neuroanatomical correlates” of cognitive function – what part of the brain is responsible for specific tasks and abilities? There is no specific one-to-one correlation. I think the best current summary of how the brain is organized is that it is made of networks of modules. Modules are nodes in the brain that do specific processing, but they participate in multiple different networks or circuits, and may even have different functions in different networks. Networks can also be more or less widely distributed, with the higher cognitive functions tending to be more complex than specific simple tasks.

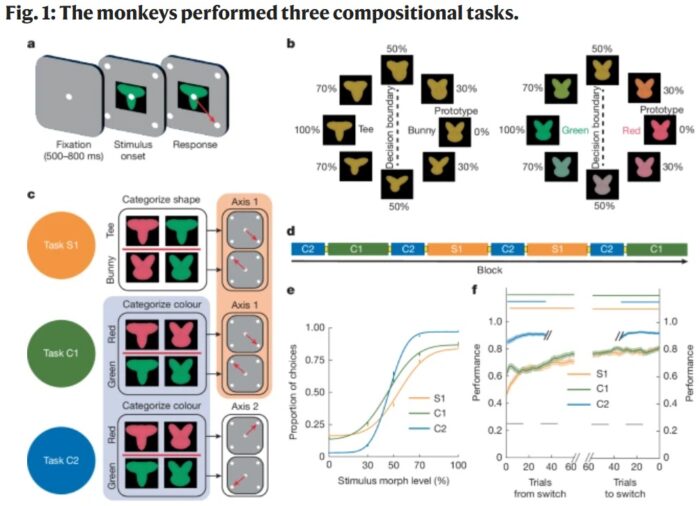

What, then, is happening in the brain when we exhibit this cognitive flexibility, repurposing elements of one learned task to help learn a new task? To address this question Princeton researchers looked at rhesus macaques. Specifically they wanted to know if primates engage in what is called “compositionality” – breaking down a task into specific components that can then be combined to perform the task. Those components can then be combined in new arrangements to compose a new task, like building with legos.

Continue Reading »

Nov 17, 2025

I am currently in Dubai at the Future Forum conference, and later today I am on a panel about the future of the mind with two other neuroscientists. I expect the conversation to be dynamic, but here is the core of what I want to say.

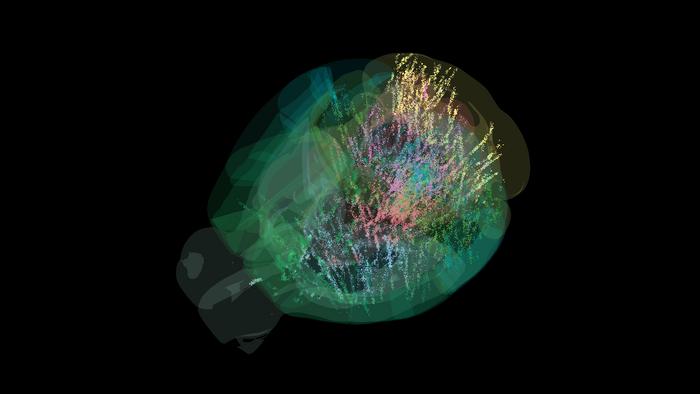

As I have been covering here over the years in bits and pieces, there seem to be several technologies converging on at least one critical component of research into consciousness and sentience. The first is the ability to image the functioning of the brain, in addition to the anatomy, in real time. We have functional MRI scanning, PET, and EEG mapping, which enable us to see cerebral blood flow, metabolism, and electrical activity. This allows researchers to ask questions such as: what parts of the brain light up when a subject is experiencing something or performing a specific task? The data is relatively low resolution (compared to the neuronal level of activity) and noisy, but we can pull meaningful patterns from this data to build our models of how the brain works.

The second technology which is having a significant impact on neuroscience research is computer technology, including but not limited to AI. All the technologies I listed above are dependent on computing, and as the software improves, so does the resulting imaging. AI is now also helping us make sense of the noisy data. But the computing technology flows in the other direction as well – we can use our knowledge of the brain to help us design computer circuits, whether in neural networks or even just virtually in software. This creates a feedback loop whereby we use computers to understand the brain, and the resulting neuroscience to build better computers.

Continue Reading »

Oct 06, 2025

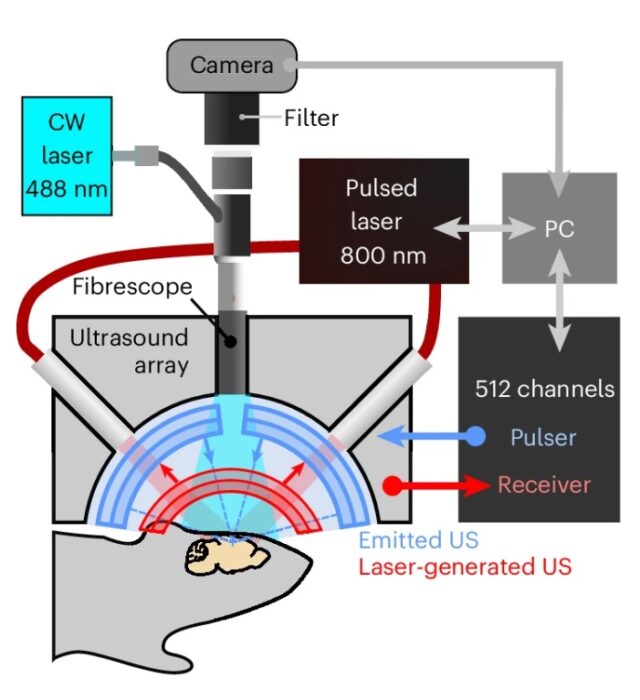

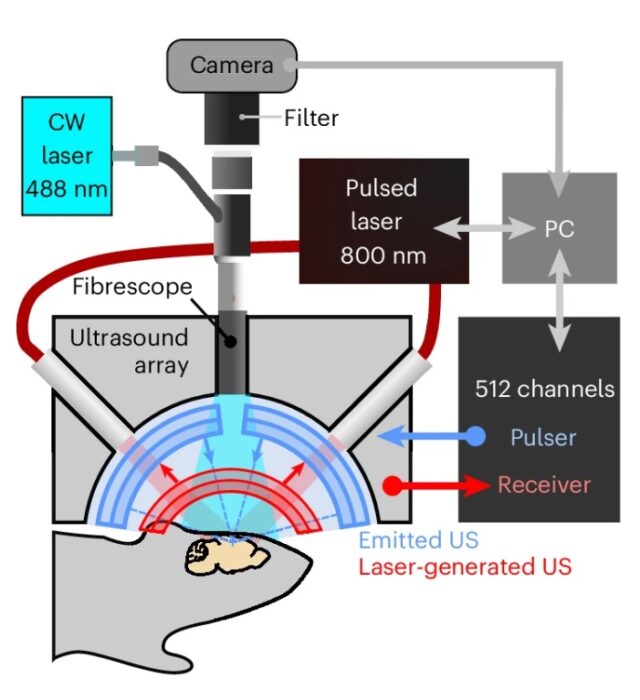

The technique is called holographic transcranial ultrasound neuromodulation – which sounds like a mouthful but just means using multiple sound waves in the ultrasonic frequency range to affect brain function. Most people know about ultrasound as an imaging technique, used, for example, to image fetuses while still in the womb. But ultrasound has other applications as well.

Sound waves are just another form of directed energy, and that energy can be used not only to image things but to affect them. At higher intensity they can heat tissue and break up objects through vibration. Ultrasound has been approved to treat tumors by heating and killing them, or to break up kidney stones. Ultrasound can also affect brain function, but this has proven very challenging.

The problem with ultrasonic neuromodulation is that low intensity waves have no effect, while high intensity waves cause tissue damage through heating. There does not appear to be a window where brain function can be safely modulated. However, a new study may change that.

The researchers are developing what they call holographic ultrasound neuromodulation – they use many simultaneous ultrasound origin points that cause areas of constructive and destructive interference in the brain, which means there will be locations where the intensity of the ultrasound will be much higher. The goal is to activate or inhibit many different points in a brain network simultaneously. By doing this they hope to affect the activity of the network as a whole at low enough intensity to be safe for the brain.
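To make the interference idea concrete, here is a minimal sketch. It is not the researchers' method, just an idealized 2D illustration in which every number (sound speed, frequency, emitter layout) and every function name is an assumption chosen for the example, and effects such as attenuation by the skull are ignored. Each emitter is given a phase offset so that all waves arrive in step at a chosen target, which produces constructive interference there and a much weaker combined wave elsewhere.

```python
import cmath
import math

SPEED_OF_SOUND = 1500.0  # m/s, rough value for soft tissue (assumption)
FREQUENCY = 500e3        # Hz, hypothetical transducer frequency
WAVENUMBER = 2 * math.pi * FREQUENCY / SPEED_OF_SOUND

# Hypothetical array: 16 point emitters spaced 5 mm apart along the x axis.
EMITTERS = [(i * 0.005, 0.0) for i in range(16)]


def focus_phases(target):
    """Phase offset per emitter that cancels its travel phase to the target."""
    return [-WAVENUMBER * math.dist(e, target) for e in EMITTERS]


def relative_amplitude(point, phases):
    """Combined wave amplitude at a point, as a fraction of the maximum possible.

    Each emitter contributes a unit-strength wave whose phase depends on the
    distance travelled plus its programmed offset (distance falloff ignored).
    """
    total = sum(
        cmath.exp(1j * (WAVENUMBER * math.dist(e, point) + p))
        for e, p in zip(EMITTERS, phases)
    )
    return abs(total) / len(EMITTERS)


if __name__ == "__main__":
    target = (0.0375, 0.05)      # 5 cm deep, centered under the array
    off_target = (0.0475, 0.05)  # 1 cm to the side of the target
    phases = focus_phases(target)
    print("at target: ", relative_amplitude(target, phases))
    print("off target:", relative_amplitude(off_target, phases))
```

In this toy model the waves add fully only at the target, so each individual emitter can run at low intensity while the focal spot still receives a much stronger combined signal. Targeting several network nodes at once would need a more elaborate phase pattern than this single-focus sketch.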

Continue Reading »

Sep 29, 2025

We are all familiar with the notion of “being on autopilot” – the tendency to initiate and even execute behaviors out of pure habit rather than conscious decision-making. When I shower in the morning I go through roughly the same sequence of behaviors, while my mind is mostly elsewhere. If I am driving to a familiar location the word “autopilot” seems especially apt, as I can execute the drive with little thought. Of course, sometimes this leads me to take my most common route by habit even when I intend to go somewhere else. You can, of course, override the habit through conscious effort.

That last word – effort – is likely key. Psychologists have found that humans have a tendency to maximize efficiency, which is another way of saying that we prioritize laziness. Being lazy sounds like a vice, but evolutionarily it probably is about not wasting energy. Animals, for example, tend to be active only as much as is absolutely necessary for survival, but we tend to see their laziness as conserving precious energy.

We evolved to conserve mental energy as well. We are not using all of our conscious thought and attention to do everyday activities, like walking. Some activities (breathing, walking) are so critical that there are specialized circuits in the brain for executing them. Other activities are voluntary or situational, like shooting baskets, but may still be important to us, so there is a neurological mechanism for learning these behaviors. The more we do them, the more subconscious and automatic they become. Sometimes we call this “muscle memory” but it’s really mostly in the brain, particularly the cerebellum. This is critical for mental efficiency. It also allows us to do one common task that we have “automated” while using our conscious brain power to do something else more important.

Continue Reading »

Sep 04, 2025

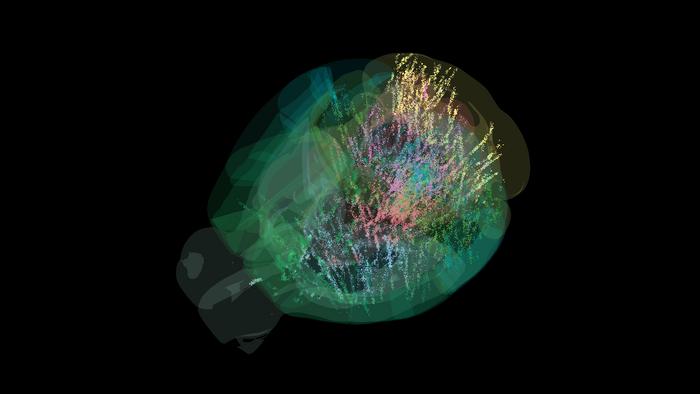

Researchers have just presented the results of a collaboration among 22 neuroscience labs mapping the activity of the mouse brain down to the individual cell. The goal was to see brain activity during decision-making. Here is a summary of their findings:

“Representations of visual stimuli transiently appeared in classical visual areas after stimulus onset and then spread to ramp-like activity in a collection of midbrain and hindbrain regions that also encoded choices. Neural responses correlated with impending motor action almost everywhere in the brain. Responses to reward delivery and consumption were also widespread. This publicly available dataset represents a resource for understanding how computations distributed across and within brain areas drive behaviour.”

Essentially, activity in the brain correlating with a specific decision-making task was more widely distributed in the mouse brain than they had previously suspected. But more specifically, the key question is – how does such widely distributed brain activity lead to coherent behavior? The entire set of data is now publicly available, so other researchers can access it to ask further research questions. Here is the specific behavior they studied:

“Mice sat in front of a screen that intermittently displayed a black-and-white striped circle for a brief amount of time on either the left or right side. A mouse could earn a sip of sugar water if they quickly moved the circle toward the center of the screen by operating a tiny steering wheel in the same direction, often doing so within one second.”

Further, the mice learned the task, and were able to guess which side they needed to steer towards even when the circle was very dim, based on their past experience. This enabled the researchers to study anticipation and planning. They were also able to vary specific task details to see how the change affected brain function. And they recorded the activity of single neurons to see how their activity was predicted by the specific tasks.

Continue Reading »

Aug 12, 2025

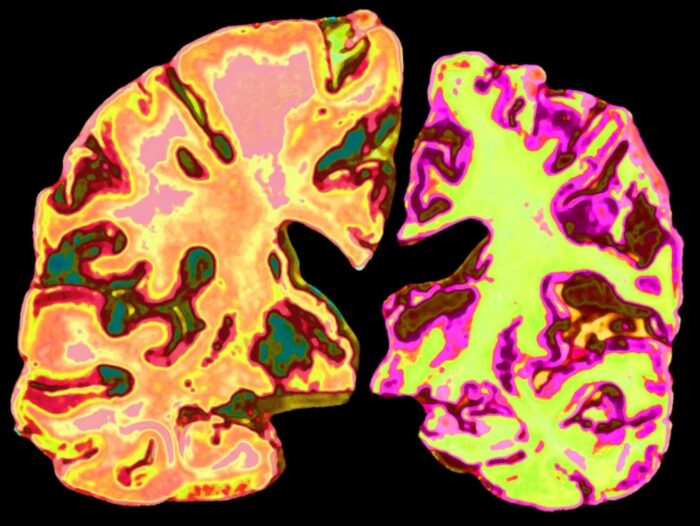

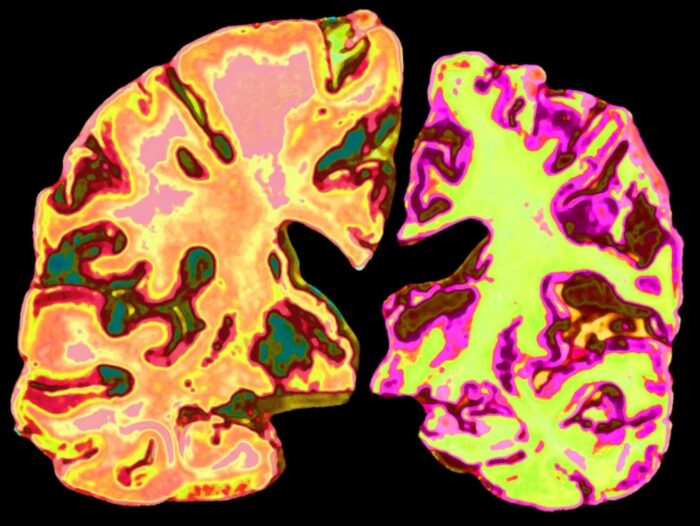

This is an interesting story, and I am trying to moderate my optimism. Alzheimer’s disease (AD), the major cause of dementia in humans, is a very complex disease. We have been studying it for decades, revealing numerous clues as to what kicks it off, what causes it to progress, and how to potentially treat it. This has led to a lot of knowledge about the disease, but only recently resulted in effective disease-modifying treatments – the anti-amyloid treatments.

Another line of investigation has focused on lithium – that’s right, the element, and a major component of EV batteries. Lithium has long been recognized as a treatment for various mood and mental disorders, and was approved in 1970 in the US for the treatment of bipolar disorder. That is what I learned about it in medical school – it was the standard of care for BD but no one really knew how it worked. It was the only “drug” that was just an element. It had a certain mystery to it, but it clearly was effective.

Now lithium is emerging as a potential treatment for AD. A recent study of lithium in mice has brought the treatment into the mainstream press, so it’s worth a review. Because of lithium’s benefit for BD it was studied for its effects on the brain. But also, it was observed that patients getting lithium treatment for BD had a lower risk of AD. Other observational data, such as levels of lithium in drinking water, also appeared to have this protective association. This kind of observational data is never definitive, but it was interesting enough to warrant further research.

Continue Reading »

Jun 12, 2025

The human brain is extremely good at problem-solving, at least relatively speaking. Cognitive scientists have been exploring how, exactly, people approach and solve problems – what cognitive strategies do we use, and how optimal are they? A recent study extends this research and includes a comparison of human problem-solving to machine learning. Would an AI, which can find an optimal strategy, follow the same path as human solvers?

The study was specifically designed to look at two specific cognitive strategies, hierarchical thinking and counterfactual thinking. In order to do this they needed a problem that was complex enough to force people to use these strategies, but not so complex that it could not be quantified by the researchers. They developed a system by which a ball may take one of four paths, at random, through a maze. The ball is hidden from view to the subject, but there are auditory clues as to the path the ball is taking. The clues are not definitive so the subject has to gather information to build a prediction of the ball’s path.

What the researchers found is that subjects generally started with a hierarchical approach – this means they broke the problem down into simpler parts, such as which way the ball went at each decision point. Hierarchical reasoning is a general cognitive strategy we employ in many contexts. We do this whenever we break a problem down into smaller manageable components. This term more specifically refers to reasoning that starts with the general and then progressively homes in on the more detailed. So far, no surprise – subjects broke the complex problem of calculating the ball’s path into bite-sized pieces.

What happens, however, when their predictions go awry? They thought the ball was taking one path but then a new clue suggests it has been taking another. That is where they switch to counterfactual reasoning. This type of reasoning involves considering the alternative, in this case, what other path might be compatible with the evidence the subject has gathered so far. We engage in counterfactual reasoning whenever we consider other possibilities, which forces us to reinterpret our evidence and make new hypotheses. This is what subjects did; however, they did not do it every time. In order to engage in counterfactual reasoning in this task the subjects had to accurately remember the previous clues. If they thought they did have a good memory for prior clues, they shifted to counterfactual reasoning. If they did not trust their memory, then they didn’t.

Continue Reading »
