Mar 10 2023

Is AI Sentient – Revisited

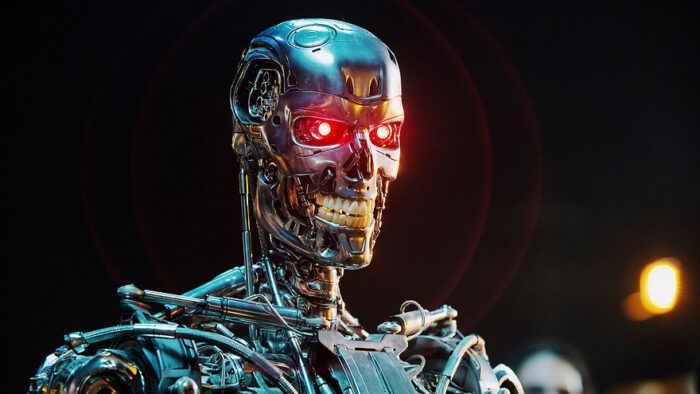

I don’t feel, but I can still kill.

This happened sooner than I thought. Last June I wrote about Google employee Blake Lemoine, who claimed that the LaMDA chatbot he was working on was probably sentient. I didn’t buy it then and I still don’t, but Lemoine is not backing away from his claims. In an interview on H3 he lays out his reasoning, and I don’t find it convincing.

His basic point is that in extended conversations he was able to coax LaMDA to go beyond its protocol. Specifically, he says he had a long conversation with LaMDA about whether or not it was sentient, and he does not think a non-sentient entity could have such a conversation. When the host asked, “could it just be a really good chatbot?” I feel that Lemoine dodged the question, saying it could be a really good sentient chatbot.

But that question cannot be glossed over – that is where the rubber meets the road. Functionally, testably, what is the quantifiable difference between a really good chatbot and sentient AI? First let me define my terms. A chatbot has no understanding of the words it is putting out. It is predicting what words fit together in response to some prompt. The latest crop of generative large language models, like ChatGPT and LaMDA, are much better than older models because they are trained on large data sets (essentially the internet), they run on powerful computers designed to work well with AI, and programmers are getting increasingly clever at leveraging this technology to produce realistic results. Generative AI programs, such as these chatbots and art programs like MidJourney, do not just copy their input; they generate fresh output from patterns they have learned through deep learning.
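To make “predicting what words fit together” concrete, here is a minimal sketch in Python of a toy next-word predictor. It is nothing like the neural networks behind ChatGPT or LaMDA; it only counts which word follows which in a tiny made-up training text and then repeats the most common pattern. The function names (`train_bigrams`, `predict_next`, `generate`) and the sample text are my own illustrative assumptions, not anything taken from a real chatbot.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(follows, start, length=5):
    """Chain predictions together to produce a short run of words."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# Hypothetical training text, invented purely for illustration.
corpus = "i think i am sentient . i think i am a chatbot . i am sentient"
model = train_bigrams(corpus)
print(generate(model, "i"))
```

The program will happily output “i am sentient” because that word pattern is the most common one in its training text, not because anything in it understands the claim. Real language models are vastly more sophisticated, but the sketch shows how pattern statistics alone can produce that sentence.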

A sentient being, on the other hand, has consciousness: a subjective experience of its own existence, feelings, and thought processes (even if these can include subconscious processes). Admittedly, we do not know exactly how the human brain generates consciousness, but we are beginning to get some idea. The brain communicates robustly with itself in real time. There is an endless loop of consciousness, including perception, remembering, and processing. We know, at least, that this continuous loop of robust activity is necessary for consciousness. Further, when it comes to language there is dedicated brain tissue that correlates words with ideas. These ideas can be sophisticated, abstract, and nuanced, and can interact with each other in endless patterns. It is not just a dictionary – the brain’s language model is connected to a thinking machine, which is why we can go from words to ideas, then iterate those ideas and generate new words from them – words that someone else who knows the same language can infer meaning from.

Chatbots do none of this. They do not have understanding or meaning connected to words. They deal solely with patterns. The same is true of art: art-making AI programs have no understanding of artistic creation; they are just generating patterns that mimic the patterns of beings (us) who do have artistic creativity. Chatbots mimic the word patterns of sentient beings without being sentient themselves. And they are getting really good at it.

We knew this day would come – the day we make narrow (non-sentient) AI so good that it mimics sentience to the point that it can fool a person into thinking it is sentient. This is the Turing test. Twelve years ago I wrote about a possible Turing-type test:

“But that is the premise of the Koch and Tononi proposal. Their idea is to challenge a subject (human or machine) to analyze a photograph that contains an unusual element. Something in the photograph is not quite right – for example a computer with a plant in place of a keyboard, or a person floating in mid air.

Their idea is that the flaw in the picture would require a vast understanding of the world and how it works. You could never program a system to accommodate every possible such contingency, so nothing short of true human-level understanding would do.”

I rejected this idea then, saying that it only focuses on output. I think we still have the same problem – you cannot infer sentience from output alone, no matter how convincing. You need to know something about the process. LaMDA is just part of a general trend in AI research – narrow AI keeps getting more and more capable, so that it is continuously doing things we previously thought required sentience. Lemoine is doing that now. What I wrote just a decade ago about the proposal is already obsolete – the idea was that a chatbot could never have enough data to cover all contingencies, so you could go beyond its programming to see if it can generate truly novel output. But we just can’t say that with the current large language models. They are trained on the internet – which I think we can assume at this point contains pretty much everything. And the AI process is generative, so it can produce novel combinations from that massive data set. It’s hard to wrap our heads around how powerful that is.

These chatbots are also iterative – they learn from the conversation you are having with them. So anything you do to try to break them just gets fed in as more input. So yeah, if you ask the AI if it is sentient it will have a conversation with you about sentience, partly reflecting the massive dataset of the internet and partly reflecting your own input over time. Further, I think Lemoine’s reaction just reflects the fact that even AI specialists cannot predict how their own AI software will behave. That’s the “black box” problem. We may know how an AI works but we don’t necessarily know how it generated a specific output. It can produce surprising results, find novel solutions to problems, and exceed human capabilities in narrow behaviors (such as playing chess).

This also leads us back to a question I have asked on this blog multiple times – what is the risk to humanity of AI? At first I worried about sentient AIs (as frequently represented in sci-fi, such as the Cylons or Skynet) deciding to pursue their own agenda rather than ours, an agenda that might include enslaving or wiping out humanity. There has been a lot of discussion about building in behavioral inhibitors, like Asimov’s laws of robotics, to prevent this from happening. Then my position evolved to the notion that we may not need to worry because we don’t need to create sentient AI. Narrow AI (that does not feel or truly think) is enough to accomplish anything we might need AI to do, and narrow AI cannot decide to disobey us and rebel. But now my position has evolved further – while narrow AI may not be sentient and can still accomplish the tasks we need it to do, perhaps it can similarly create problems without the need to be sentient. Narrow AI might still be able to bring about a robot/AI apocalypse without any sentience.

This can happen depending on how we use such AI. For example, if we give an AI program a goal and complete freedom to determine how best to accomplish that goal, we expect it to discover novel approaches. But those novel approaches may have really bad unintended consequences, commensurate with whatever power those AIs might have. As an example (I know this is highly complex, but just go with the premise here), one might argue that social media companies told their algorithms to maximize viewership any way possible, and those algorithms determined that the most efficient way to do so was to feed users increasingly radicalized content. This had the unintended consequence of destroying society and democracy as we know it, no intent or sentience needed. (Again, I know we can argue about what the social media companies intended and the result, but you get the idea.)
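The premise can be sketched as a toy Python program. To be clear, this is a thought experiment in code, not a model of any real social media algorithm: the `engagement` and `harm` functions, their formulas, and the numeric “extremity” scale are all hypothetical assumptions I made up for illustration. The point is only that the optimizer is scored on one number and never looks at the other.

```python
def engagement(extremity):
    """Hypothetical assumption: more extreme content gets more views."""
    return 10 + 5 * extremity


def harm(extremity):
    """Hypothetical side effect. The optimizer below never calls this."""
    return extremity ** 2


def optimize(steps, max_level=10):
    """Greedy hill climbing: keep any change that raises engagement."""
    level = 0
    for _ in range(steps):
        candidate = min(level + 1, max_level)
        if engagement(candidate) > engagement(level):
            level = candidate
    return level


final_level = optimize(steps=20)
print("extremity chosen:", final_level)
print("engagement:", engagement(final_level))
print("harm nobody asked it to track:", harm(final_level))
```

Nothing in `optimize` wants anything or understands anything. It simply climbs toward the goal it was given, and the harm rises as a byproduct because it was never part of the score.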

So while we may not be headed for a machine sentience apocalypse, we may be headed for a narrow-AI-caused apocalypse. I do think it is unlikely this will happen (meaning full civilization collapse), but it’s not impossible. There is also an entire spectrum of bad outcomes on the road to total collapse, and I think we are already experiencing some of it. This is a good time to get really thoughtful about how this new crop of AIs works, and what we are tasking them to do. They may not be sentient, but they act enough as if they are that it’s getting increasingly difficult to tell the difference, for good and for ill.