Sep 13 2012

Who’s To Blame for Bad Science News Reporting

The work of a science blogger consists largely of correcting and criticizing bad science news reporting. I therefore have a love-hate relationship with horrible science reporting – I am simultaneously annoyed and disgusted at the spread of misinformation by professionals who should know better, but delighted by the excellent blog fodder. In fact I see the role of the science blogger as largely filling the gap between scientists and journalists. We tend to be journalists with a strong science background, or scientists who have developed their skill at writing and communicating to the public.

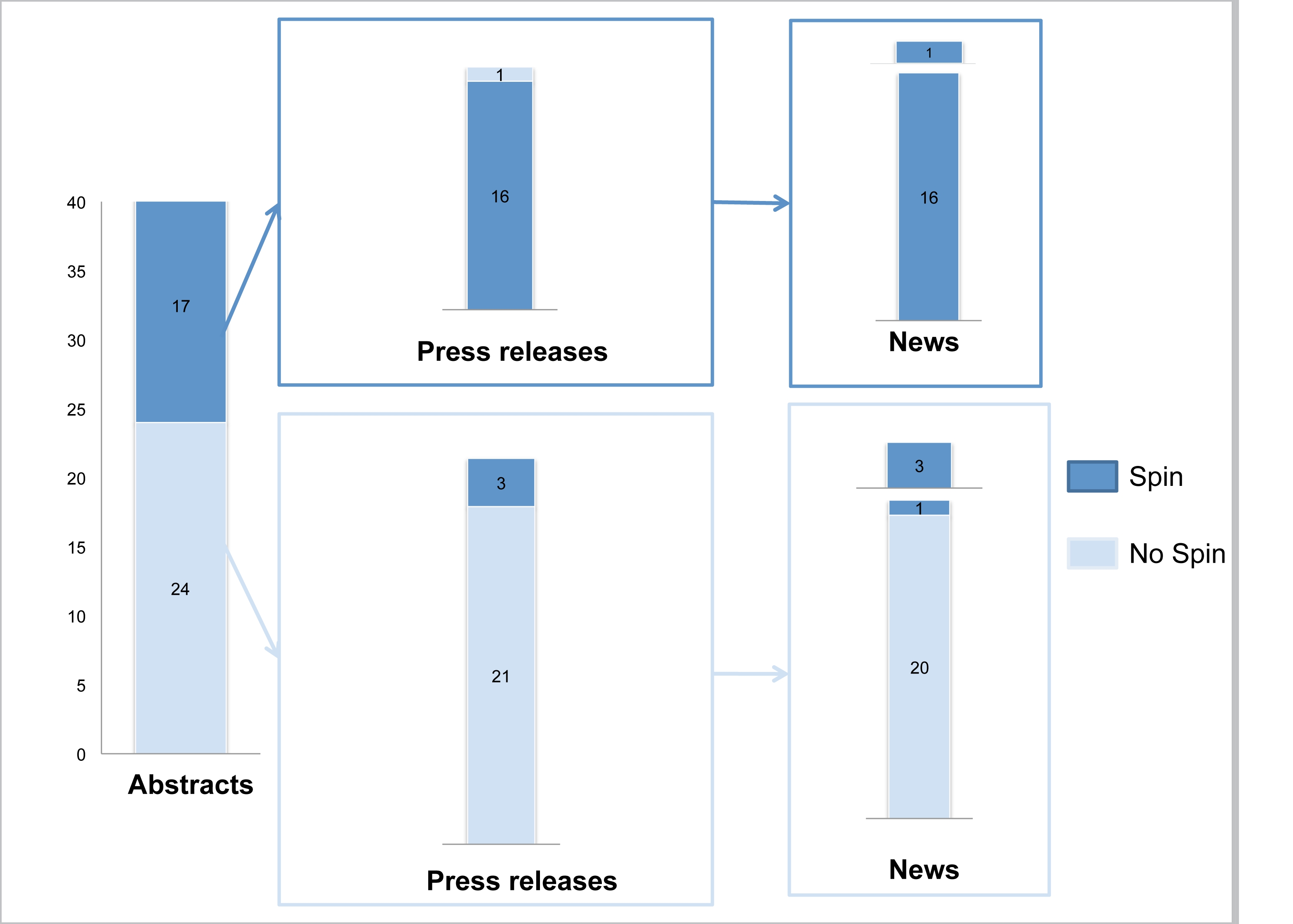

We spend a lot of time lambasting journalists for not doing their job, but in reality there are often three stages, and three parties, involved in the creation of science news: the scientists themselves and the writing in the original paper, the press officers and the press releases they write, and then the journalists and their news articles. At each step of this process there is the potential to distort and exaggerate the findings of the study.

A new study published in PLOS Medicine examines the incidence of “spin” in medical news reporting (exaggerating the clinical effects or implications seen in the study being reported) and the factors that contribute to that spin. What they found is that the single biggest factor predicting the presence of spin in health news reporting is the presence of spin in the abstract of the article written by the scientists themselves. The authors conclude:

Our results highlight a tendency for press releases and the associated media coverage of RCTs to place emphasis on the beneficial effects of experimental treatments. This tendency is probably related to the presence of “spin” in conclusions of the scientific article’s abstract. This tendency, in conjunction with other well-known biases such as publication bias, selective reporting of outcomes, and lack of external validity, may be responsible for an important gap between the public perception of the beneficial effect and the real effect of the treatment studied.

We appear to have a synergy in positive biases – researchers are biased toward finding positive results and then emphasize those results in their article, journal editors are more likely to publish positive results and then send out press releases touting them, and finally reporters like to emphasize and sensationalize positive results. The end result of all of this positive bias is a false impression in the public – and even among medical professionals – that a treatment is effective, even when it may not be. (See, for example, my recent analysis of an acupuncture meta-analysis at Science-Based Medicine.)

The following chart tells the story of how much spin the researchers found when reviewing science press releases and reporting:

I am not surprised that this chain reaction of sensationalizing study results begins with the researchers themselves. Scientists have a responsibility not to overcall their own results when interpreting their data – but we know there is a tendency to do so. The process of peer-review is supposed to be a check on this tendency, and it is, but it’s imperfect and “spin” often slips through the cracks.

Scientists also need to spend more time and energy learning how to communicate with the media and the public about their results. Even when they are reserved in their official conclusions, they often oversell their results when speaking to the media, or allow press officers or journalists to goad them into making sensational statements about their research. Further, when a press officer adds spin, distorts or exaggerates the research findings, the scientists should make an effort to correct this.

In other words – scientists need to take a certain amount of responsibility for how their own research is presented to the public at every step of the process.

None of this lets the press officers and science journalists off the hook. It is the job of the press officer to garner attention for the research published in a particular journal or by scientists in their institution, but they still have a responsibility to get the bottom line correct. Science journalists, for their part, have a responsibility to tell the real story, to talk to scientists who were not part of the study and get their opinion, to look for spin and point it out or correct it, and to put the study results into some context.

What we often see, however, is journalists who republish press releases with little or no change, or who simply take the press release at face value with no investigative journalism at all. In short – they often do not do their jobs.

Obviously there are good science journalists out there who are doing their job, but they appear to be in the minority. The result is a massive bias toward sensationalized positive results. In reporting on medical findings this has immediate practical implications, because people often make medical decisions or seek specific treatments based on this reporting.

Conclusion

Bad science news reporting is a serious problem, as this recent study points out. These data also suggest that scientists themselves have a significant influence over the ultimate reporting of their research, starting with how they interpret their own findings in the technical paper. Often it seems that scientists are not aware that they are writing for a broader public. Most of the time research papers will be read by relatively few people in a narrow field of research, and scientists are surprised and unprepared when their study gets picked up by the mainstream media.

An obvious solution is to give scientists more training in how to write conclusions that are responsible and do not lend themselves to hype, but also in how to deal with the media. There are efforts to do just that, but clearly we have a long way to go.